Inference reimagined, from Kernel to Cloud. One unified stack.

Your model, any compute, one platform. Run AI across GPUs and CPUs - engineered for the most demanding inference workloads, from kernel to cloud.

playground

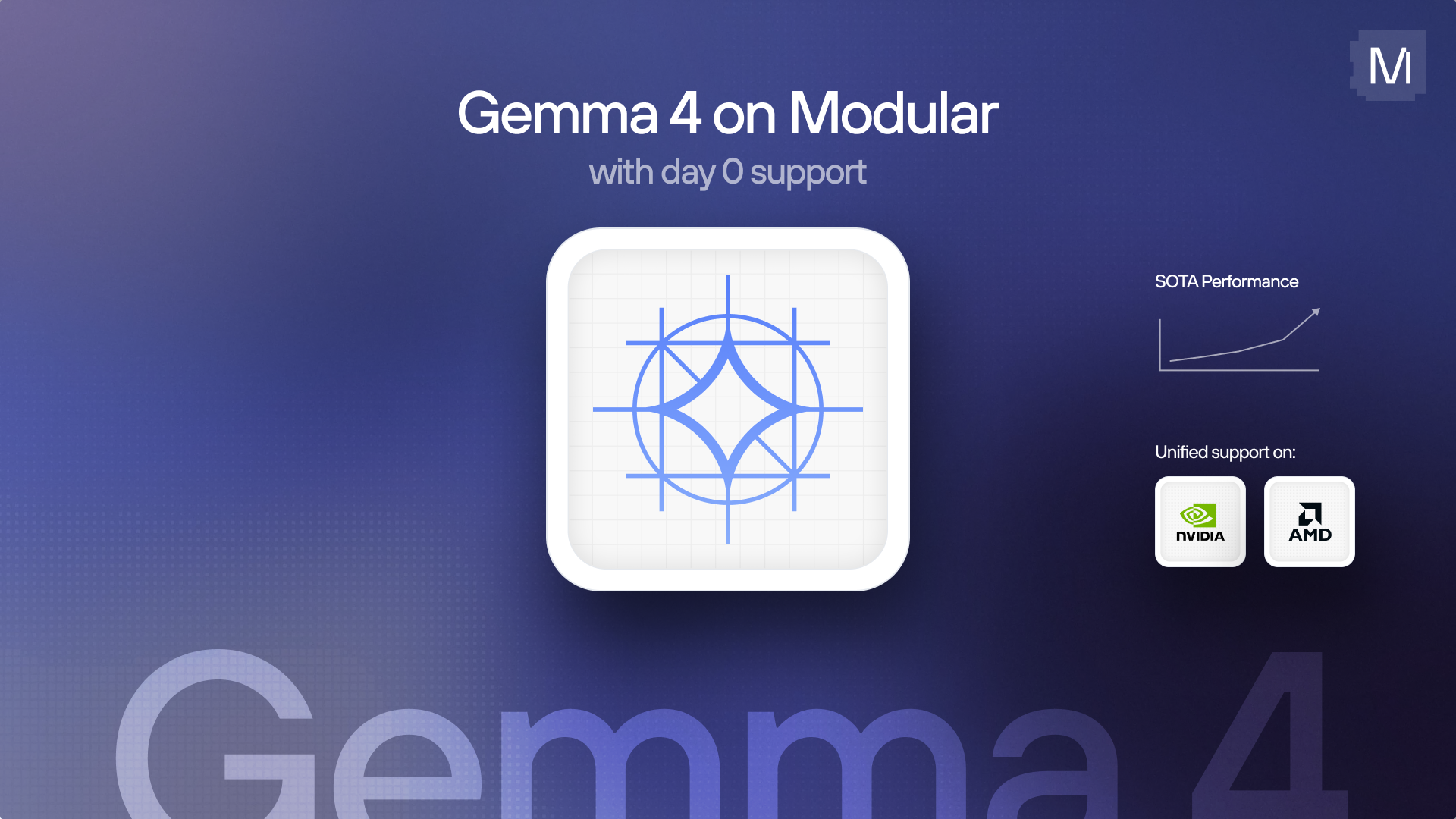

LATEST MODEL 🚀

Try Modular right here 👇

Generate images and text (videos coming soon), then request access for a full account with API token. Request a demo to get priority access.

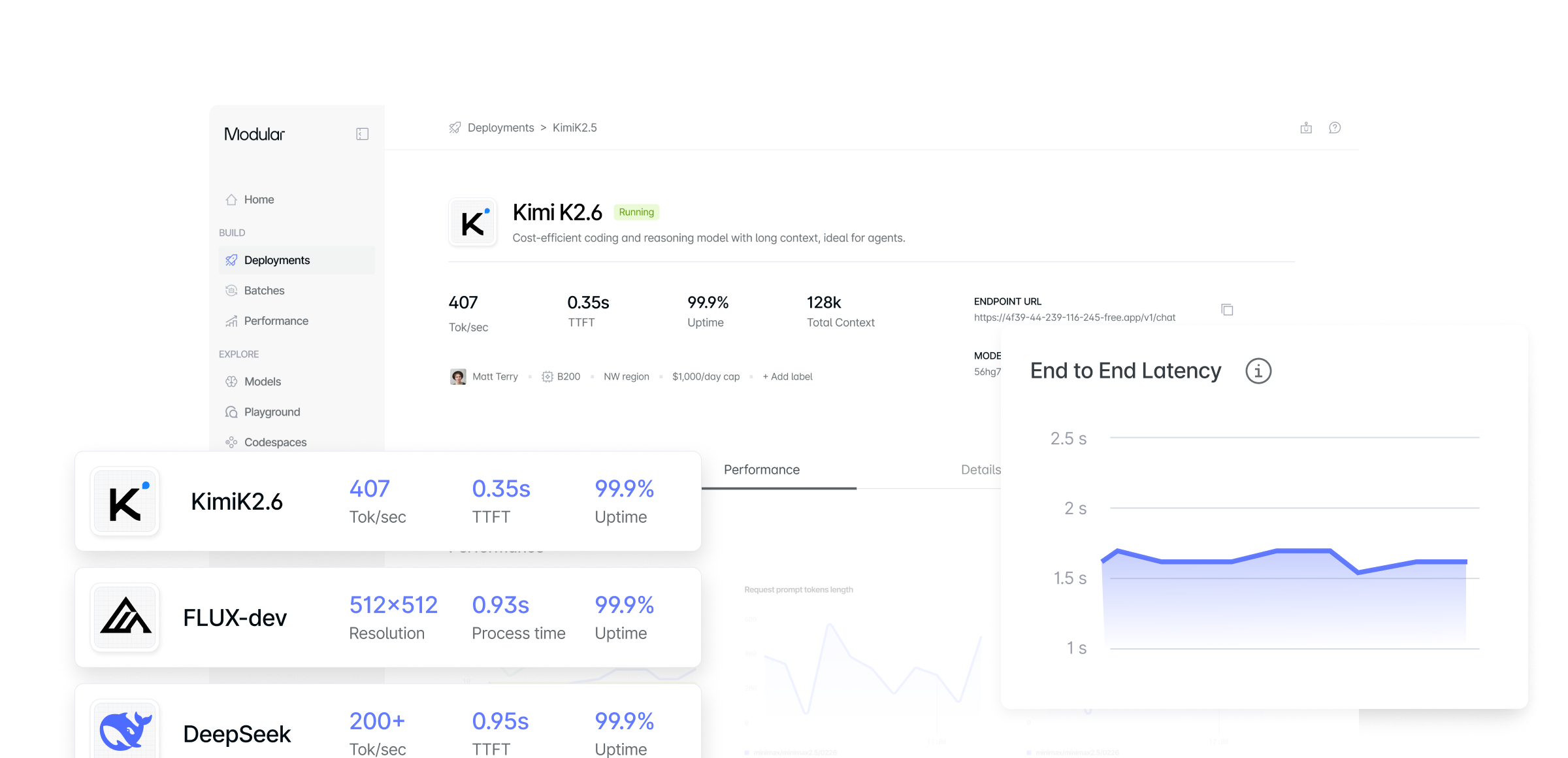

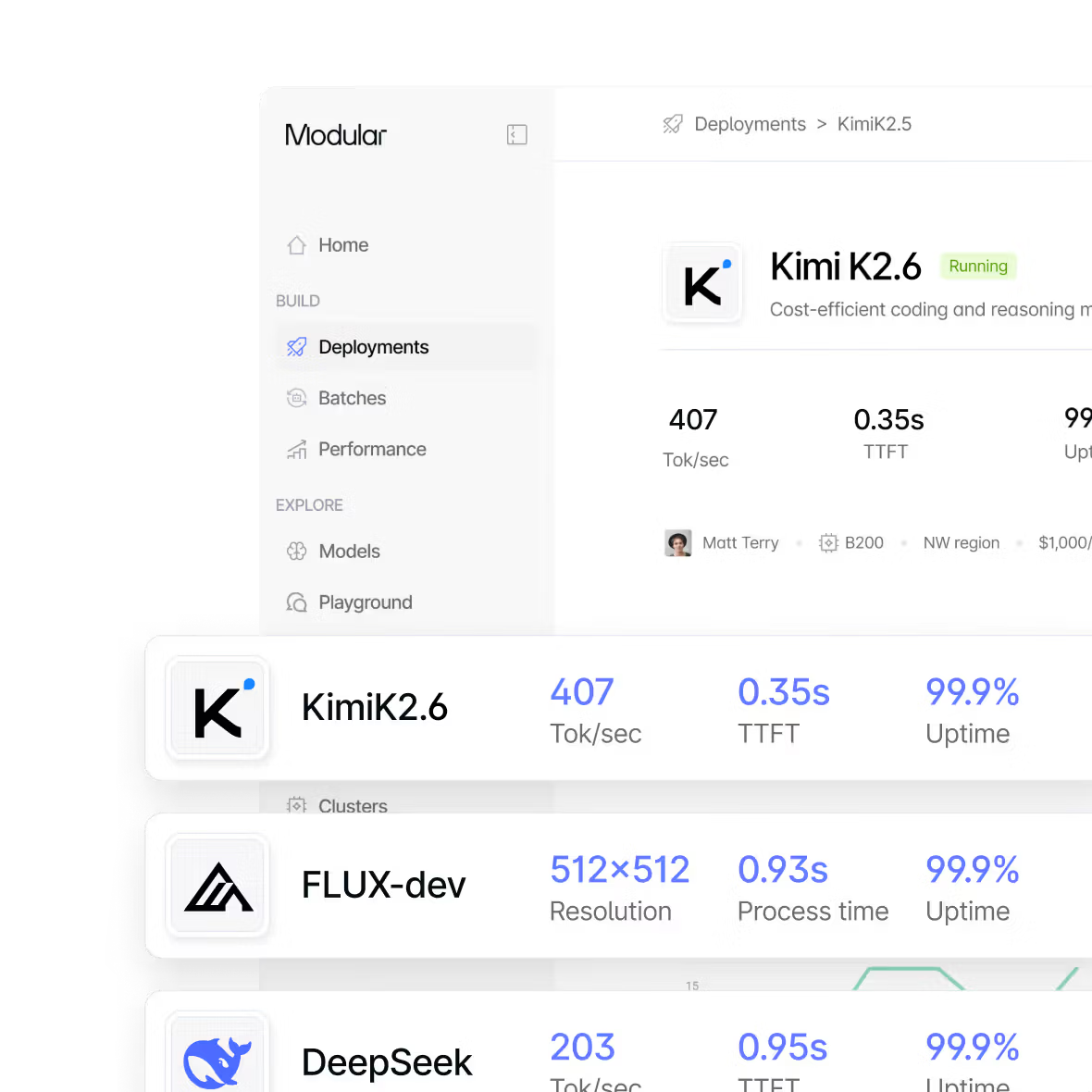

The Modular Platform

A unified AI inference platform for high-performance, portable compute - enabling full optimizations from GPU kernel to API endpoint.

Our unified infrastructure optimizes your AI pipeline with full-stack optimizations across text, image and video.

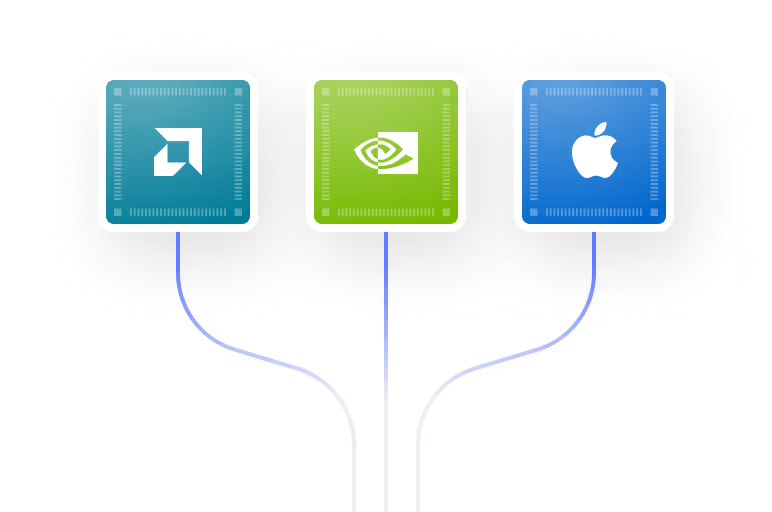

Same model, same codebase - seamlessly runs across NVIDIA, AMD, Intel, ARM, and Apple Silicon. True hardware portability.

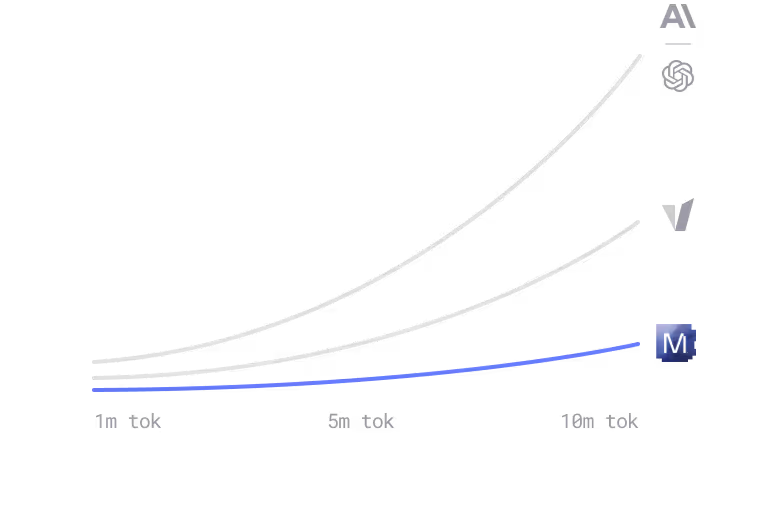

Higher GPU utilization, faster model compilation & runtime, and dynamic hardware selection means savings compound at scale.

We’re built different.

A unified AI inference stack giving you total control.

Most AI infrastructure today is assembled from parts that were never designed to work together: one tool for serving, another for optimization, another for custom kernels, something else for scaling. Every layer you add is another place where things break.

We built Modular to fix that.

One unified stack from kernels to cloud, built from the ground up for heterogeneous compute. Scale AI from cloud to edge - CPUs, GPUs, and ASICs.

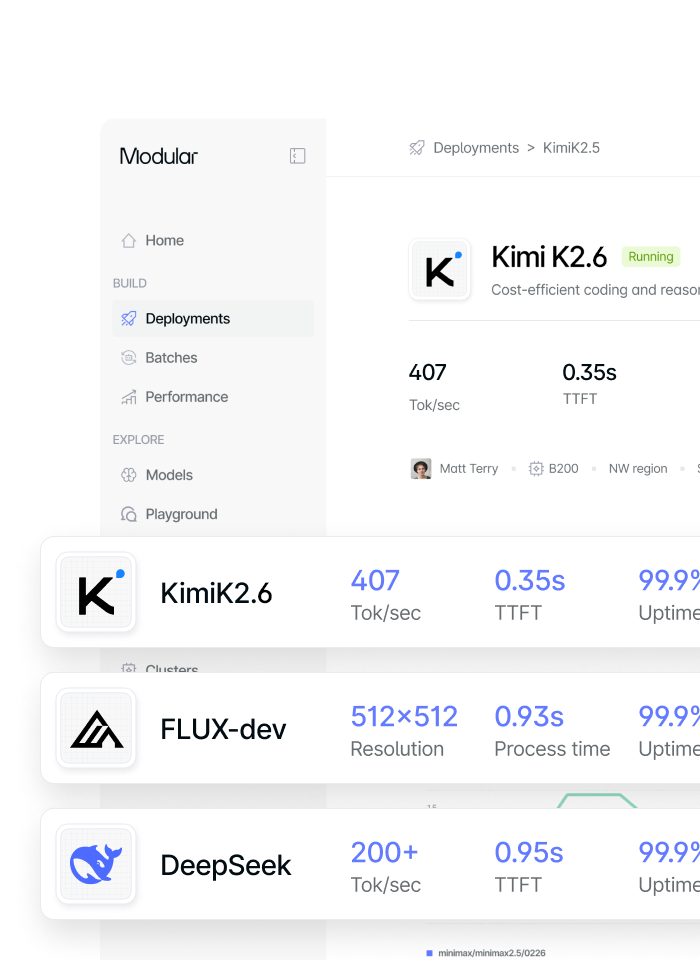

Run AI workloads at production scale with SOTA performance on NVIDIA and AMD GPUs in Modular’s hosted cloud or your VPC. Our full-stack approach enables complete workload customization, performance tuning, and deep observability.

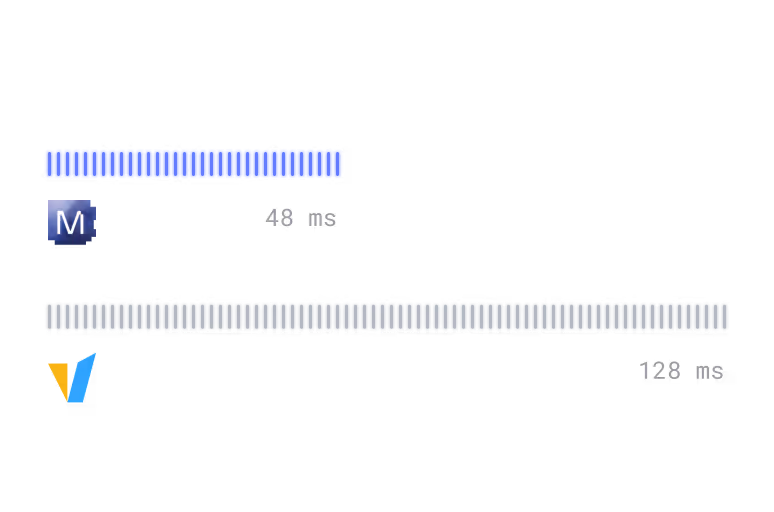

Our high-performance, hardware-agnostic serving framework, MAX, automatically optimizes kernels and request execution across accelerators. 2x performance improvement over vLLM on diverse hardware through a single container and OpenAI-compatible API.

Run 1000+ models like DeepSeek and Kimi out of the box with MAX. PyTorch-like model APIs and AI coding skills make it easy to port custom models in minutes.

100s of SOTA, composable kernels written in our high-performance systems language, Mojo. Extend or write custom GPU kernels for maximum performance across accelerators.

Modular was built to be natively heterogeneous. Run workloads seamlessly across NVIDIA, AMD, and Apple GPUs as well as Intel, AMD, and ARM CPUs.

Fast inference, deployed your way

Run top open models or your own custom models with flexible deployment — in our managed cloud or directly in your own VPC.

Inference Solutions

Shared endpoints

High-performance inference. No infrastructure to manage, no long-term commitments. Test easily. Per-token pricing.

Dedicated endpoints

Reserved NVIDIA and AMD GPUs. Per-minute pricing that’s easy and flexible.

Custom Models

Bring your own custom or fine-tuned models. Deploy on optimized infrastructure with per-minute pricing.

Deployment solutions

Fully managed by Modular + we handle SLAs and security so you don't have to. Best token economics on NVIDIA and AMD.

Your Cloud with diverse hardware support. Modular manages the control plane while inference runs in your VPC. You own the hardware, data, and cloud credits.

MAX and Mojo in a container, running on your infrastructure. NVIDIA, AMD & more - your hardware, your cloud, your rules.

Customer Stories

Top AI models, or your custom ones

Our forward-deployed engineers optimize every deployment for SOTA performance - whether you're running a top open model or a custom model.

- Build with popular models

- Build by specific use case

Scale to diverse hardware, seamlessly

Avoid vendor lock-in and GPU scarcity when you can deploy on whatever hardware that delivers the best price-performance for your workload — without rewriting code.

Write once, deploy everywhere

Breakthrough compiler technology that automatically generates optimized kernels for any hardware target.

Vendor Independence

Break free from GPU vendor lock-in. Modular delivers peak performance across NVIDIA and AMD.

Custom models are now easy. Try it.

Our agentic tooling and forward-deployed engineers help you port and deploy your models quickly - so you can evaluate performance and start running production workloads immediately.

Managed simplicity + Self-hosted control. Pick both.

Modular eliminates the tradeoff, providing the simplicity of managed inference with engineering-level control.

Dedicated endpoints with predictable performance

Forward-deployed engineers optimizing your workloads

Compiler-level optimizations that fuse the entire inference graph

Custom kernel programmability in Mojo & Python

GPU portability across NVIDIA and AMD without rewriting code

No black boxes. No vendor lock-in. No operational burden.

Get started with Modular

Schedule a demo of Modular and explore a custom end-to-end deployment built around your models, hardware, and performance goals.

Distributed, large-scale online inference endpoints

Highest-performance to maximize ROI and latency

Deploy in Modular cloud or your cloud

View all features with a custom demo

Book a demo

Talk with our sales lead Jay!

30min demo. Evaluate with your workloads. Ask us anything.

Book a demo for a personalized walkthrough of Modular in your environment. Learn how teams use it to simplify systems and tune performance at scale.

Custom 30 min walkthrough of our platform

Cover specific model or deployment needs

Flexible pricing to fit your specific needs

Book a demo

Talk with our sales lead Jay!

Run any open source model in 5 minutes, then benchmark it. Scale it to millions yourself (for free!).

Install Mojo and get up and running in minutes. A simple install, familiar tooling, and clear docs make it easy to start writing code immediately.

"Mojo gives me the feeling of superpowers. I did not expect it to outperform a well-known solution like llama.cpp."

“What @modular is doing with Mojo and the MaxPlatform is a completely different ballgame.”

"Mojo is Python++. It will be, when complete, a strict superset of the Python language. But it also has additional functionality so we can write high performance code that takes advantage of modern accelerators."

“I'm very excited to see this coming together and what it represents, not just for MAX, but my hope for what it could also mean for the broader ecosystem that mojo could interact with.”

“Mojo and the MAX Graph API are the surest bet for longterm multi-arch future-substrate NN compilation”

“Max installation on Mac M2 and running llama3 in (q6_k and q4_k) was a breeze! Thank you Modular team!”

“The Community is incredible and so supportive. It’s awesome to be part of.”

"It worked like a charm, with impressive speed. Now my version is about twice as fast as Julia's (7 ms vs. 12 ms for a 10 million vector; 7 ms on the playground. I guess on my computer, it might be even faster). Amazing."

"C is known for being as fast as assembly, but when we implemented the same logic on Mojo and used some of the out-of-the-box features, it showed a huge increase in performance... It was amazing."

"after wrestling with CUDA drivers for years, it felt surprisingly… smooth. No, really: for once I wasn’t battling obscure libstdc++ errors at midnight or re-compiling kernels to coax out speed. Instead, I got a peek at writing almost-Pythonic code that compiles down to something that actually flies on the GPU."

“A few weeks ago, I started learning Mojo 🔥 and MAX. Mojo has the potential to take over AI development. It's Python++. Simple to learn, and extremely fast.”

“Mojo can replace the C programs too. It works across the stack. It’s not glue code. It’s the whole ecosystem.”

"This is about unlocking freedom for devs like me, no more vendor traps or rewrites, just pure iteration power. As someone working on challenging ML problems, this is a big thing."

“I tried MAX builds last night, impressive indeed. I couldn't believe what I was seeing... performance is insane.”

“It’s fast which is awesome. And it’s easy. It’s not CUDA programming...easy to optimize.”

“I'm excited, you're excited, everyone is excited to see what's new in Mojo and MAX and the amazing achievements of the team at Modular.”

“Mojo destroys Python in speed. 12x faster without even trying. The future is bright!”

“The more I benchmark, the more impressed I am with the MAX Engine.”

Mojo destroys Python in speed. 12x faster without even trying. The future is bright!

“Tired of the two language problem. I have one foot in the ML world and one foot in the geospatial world, and both struggle with the 'two-language' problem. Having Mojo - as one language all the way through is be awesome.”

.png)