Enterprise innovation, supercharged by Modular

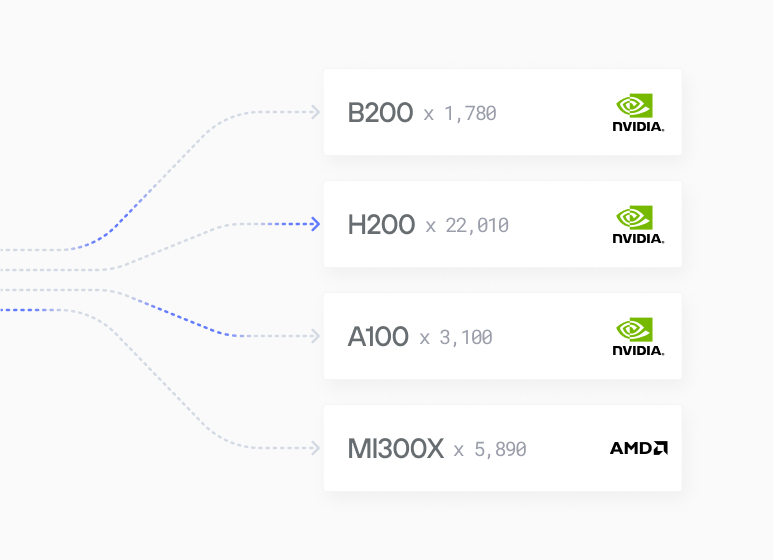

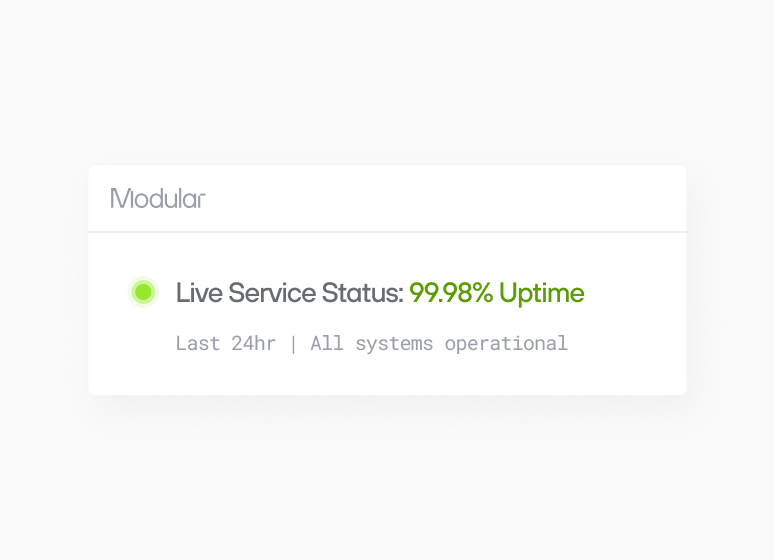

Modular delivers high-speed inference, cross-architecture flexibility, and SLA-backed reliability—so your teams can innovate faster and scale without surprises.

+80% faster vs vLLM (0.13)

+70% cost reduction vs vLLM (0.13)

2-5x faster from research to production vs writing traditional kernels

Case Studies

Scales for enterprises

“I'm very excited to see this coming together and what it represents, not just for MAX, but my hope for what it could also mean for the broader ecosystem that mojo could interact with.”

“Tired of the two-language problem. I have one foot in the ML world and one foot in the geospatial world, and both struggle with the 'two-language' problem. Having Mojo as one language all the way through would be awesome.”

“Mojo can replace the C programs too. It works across the stack. It’s not glue code. It’s the whole ecosystem.”

"after wrestling with CUDA drivers for years, it felt surprisingly… smooth. No, really: for once I wasn’t battling obscure libstdc++ errors at midnight or re-compiling kernels to coax out speed. Instead, I got a peek at writing almost-Pythonic code that compiles down to something that actually flies on the GPU."

"This is about unlocking freedom for devs like me, no more vendor traps or rewrites, just pure iteration power. As someone working on challenging ML problems, this is a big thing."

"Mojo gives me the feeling of superpowers. I did not expect it to outperform a well-known solution like llama.cpp."

“It’s fast which is awesome. And it’s easy. It’s not CUDA programming...easy to optimize.”

“What @modular is doing with Mojo and the MaxPlatform is a completely different ballgame.”

“Mojo and the MAX Graph API are the surest bet for longterm multi-arch future-substrate NN compilation”

“Mojo destroys Python in speed. 12x faster without even trying. The future is bright!”

"Mojo is Python++. It will be, when complete, a strict superset of the Python language. But it also has additional functionality so we can write high performance code that takes advantage of modern accelerators."

"It worked like a charm, with impressive speed. Now my version is about twice as fast as Julia's (7 ms vs. 12 ms for a 10 million vector; 7 ms on the playground. I guess on my computer, it might be even faster). Amazing."

“I'm excited, you're excited, everyone is excited to see what's new in Mojo and MAX and the amazing achievements of the team at Modular.”

“Max installation on Mac M2 and running llama3 in (q6_k and q4_k) was a breeze! Thank you Modular team!”

“I tried MAX builds last night, impressive indeed. I couldn't believe what I was seeing... performance is insane.”

“The Community is incredible and so supportive. It’s awesome to be part of.”

“The more I benchmark, the more impressed I am with the MAX Engine.”

“A few weeks ago, I started learning Mojo 🔥 and MAX. Mojo has the potential to take over AI development. It's Python++. Simple to learn, and extremely fast.”

"C is known for being as fast as assembly, but when we implemented the same logic on Mojo and used some of the out-of-the-box features, it showed a huge increase in performance... It was amazing."

Sign up today

Sign up for our Cloud Platform today to get started easily.

Browse open models

Browse our model catalog, or deploy your own custom model
