Blog

Democratizing AI Compute Series

Go behind the scenes of the AI industry with Chris Lattner

Latest

Why LLM Inference Needs a New Kind of Router - Part 3

Most routing stacks ship with a fixed set of algorithms: round-robin, least-requests, consistent hashing, and so on. These are generally independent implementations rather than composable components. As a result, when a customer asks for "consistent hashing with a concurrency cap" or "cache-aware with session stickiness," the answer is a new algorithm written from scratch. Disaggregated prefill/decode makes this proliferation worse. Every variant traditionally has its own HTTP handler, discovery logic, proxy code, and session management, which means hundreds of lines of additional plumbing per variant.
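To make the composability point concrete, here is a minimal sketch of "consistent hashing with a concurrency cap" built from two small reusable pieces instead of a new algorithm. It is an illustration only, not Modular's router: the names `Pod`, `concurrency_cap`, `rendezvous_hash`, and `compose` are hypothetical, and rendezvous (highest-random-weight) hashing stands in for whichever consistent-hashing scheme a real stack uses.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Pod:
    name: str
    in_flight: int  # requests currently being served (hypothetical field)


# A stage narrows the candidate list; a picker chooses one pod from what is left.
Stage = Callable[[Sequence[Pod], str], Sequence[Pod]]
Picker = Callable[[Sequence[Pod], str], Pod]


def concurrency_cap(limit: int) -> Stage:
    """Build a stage that drops pods already serving `limit` or more requests."""
    def stage(pods: Sequence[Pod], request_key: str) -> Sequence[Pod]:
        under = [p for p in pods if p.in_flight < limit]
        return under or pods  # if every pod is saturated, keep them all
    return stage


def rendezvous_hash(pods: Sequence[Pod], request_key: str) -> Pod:
    """Pick the pod with the highest hash of (pod name, request key)."""
    def weight(pod: Pod) -> bytes:
        return hashlib.sha256(f"{pod.name}:{request_key}".encode()).digest()
    return max(pods, key=weight)


def compose(stages: Sequence[Stage], picker: Picker) -> Picker:
    """Chain filtering stages in order, then apply the picker to the survivors."""
    def route(pods: Sequence[Pod], request_key: str) -> Pod:
        candidates = pods
        for stage in stages:
            candidates = stage(candidates, request_key)
        return picker(candidates, request_key)
    return route


# Usage: the combined policy is one line of composition.
pods = [Pod("a", 0), Pod("b", 5), Pod("c", 1)]
route = compose([concurrency_cap(4)], rendezvous_hash)
chosen = route(pods, "session-42")
```

Under this design, adding session stickiness or cache awareness would mean writing one more stage rather than a new handler, proxy, and discovery path.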

Three trends from MLSys 2026

MLSys 2026 provided an excellent overview of the current state of inference across research and industry. With six sessions on LLM serving this year (twice as many as last year), the program covered opportunities and challenges at the core of Modular’s recent work. Modular was glad to sponsor the conference, and our team noted three trends that stood out across the talks, posters, and keynotes. These are all topics that Modular has been addressing from first principles, with the advantage of our unique stack.

Why LLM Inference Needs a New Kind of Router - Part 2

To route a request to the pod with the best cached prefix, you need to know which blocks are cached on which pod. That sounds simple until you look at the numbers. You may have hundreds of pods, each with thousands of cached blocks. State can change hundreds of times per second. Despite that churn, lookups need to return in microseconds because they sit on the critical path of every inference request.
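As a rough illustration of the lookup described above, the sketch below keeps an in-memory map from block hash to the pods that hold that block, and returns the pod with the longest matching prefix. It is not the data structure from the post: `PrefixIndex` and its methods are hypothetical names, and it assumes each request is already split into an ordered list of block hashes in which a block is only useful if all earlier blocks are also cached on the same pod.

```python
from collections import defaultdict


class PrefixIndex:
    """Maps each cached block hash to the set of pods that hold it."""

    def __init__(self):
        self._pods_by_block = defaultdict(set)

    def add(self, pod, block_hash):
        """Record that `pod` now has `block_hash` cached."""
        self._pods_by_block[block_hash].add(pod)

    def evict(self, pod, block_hash):
        """Record that `pod` dropped `block_hash` from its cache."""
        pods = self._pods_by_block.get(block_hash)
        if pods is not None:
            pods.discard(pod)
            if not pods:
                del self._pods_by_block[block_hash]

    def best_pod(self, block_hashes):
        """Return (pod, matched_blocks) for the longest cached prefix, or (None, 0)."""
        candidates = None
        matched = 0
        for block_hash in block_hashes:
            holders = self._pods_by_block.get(block_hash, set())
            remaining = holders if candidates is None else candidates & holders
            if not remaining:
                break  # no pod holds the prefix up to and including this block
            candidates = remaining
            matched += 1
        if not candidates:
            return None, 0
        return min(candidates), matched  # tie-break by name for determinism


# Usage: pod-a holds two leading blocks, pod-b only the first.
index = PrefixIndex()
index.add("pod-a", "b0")
index.add("pod-a", "b1")
index.add("pod-b", "b0")
best = index.best_pod(["b0", "b1", "b2"])
```

A plain dictionary like this ignores the hard parts the paragraph points at, such as concurrent updates arriving hundreds of times per second and keeping lookups within a microsecond budget.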

Hippocratic AI partners with Modular to power flexible, high-quality inference for real-time patient conversations

Every millisecond matters in real-time voice, and at Hippocratic AI's scale, latency gains compound directly into better patient experience and per-node efficiency. Production deployments run across multiple frameworks, including SGLang and vLLM, with ongoing evaluation of emerging frameworks for additional latency headroom, alongside a hardware roadmap spanning NVIDIA, AMD, and future-generation accelerators.

Translating to Mojo via AI Agents

At Modular, we’re always experimenting with the latest agentic programming tools, integrating the best ones into our workflows, and learning quite a few lessons along the way. One thing we realized is that the Mojo language is ideally suited to the needs of modern AI coding agents.

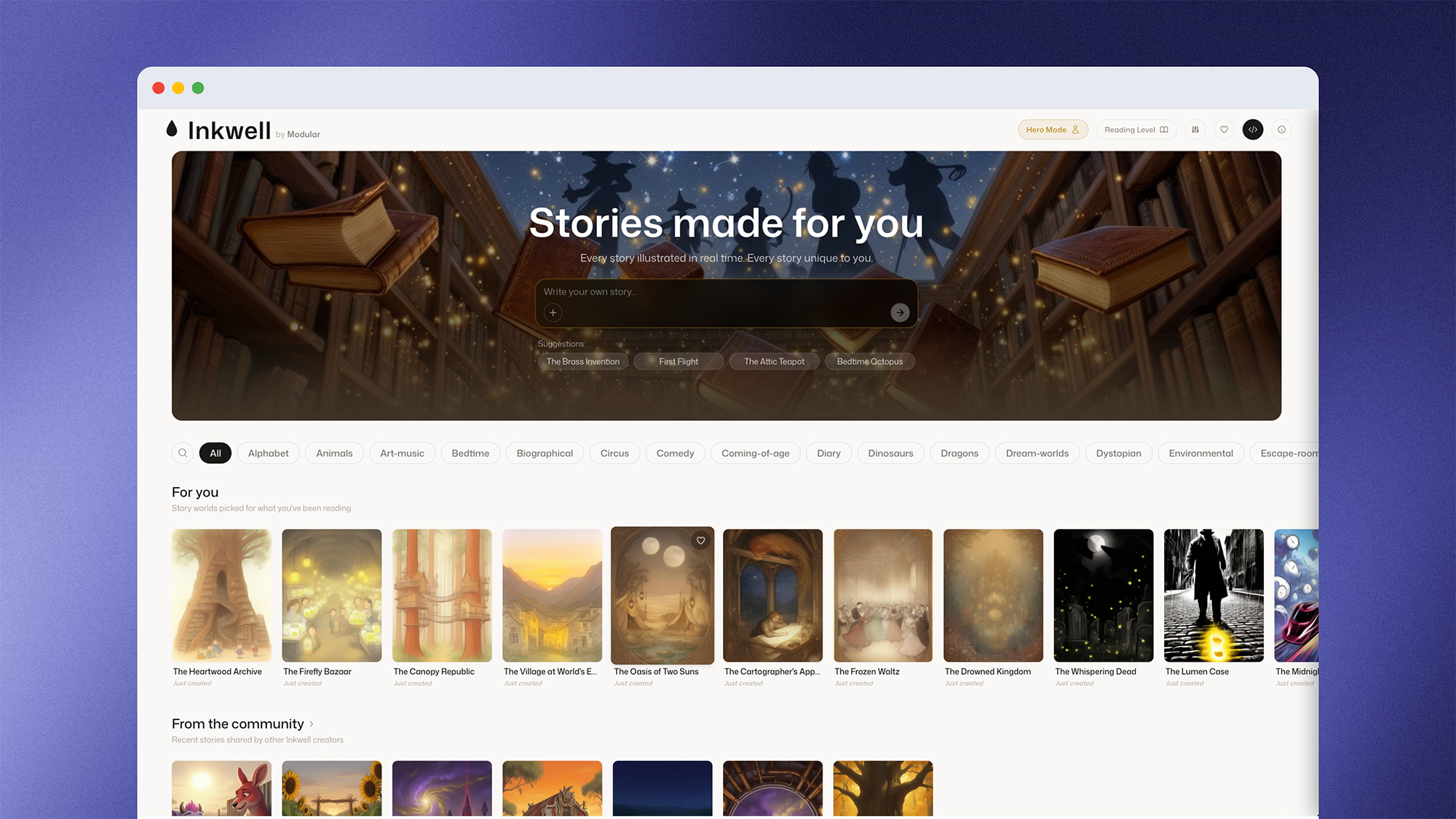

Inkwell: Why Your Inference Platform Matters As Much As Your Model

Inkwell is a web app that lets users create interactive storybooks with a custom character along infinite branching paths. When the user opens a story, the first page of text and image art streams in: text appears character by character via WebSocket within the first second, the illustration paints in as you read, and by the time you tap a choice, the next page is already written and illustrated. Building a user experience around the seamless generation of new content requires an inference layer that can perform at scale.

Modular 26.3: Mojo 1.0 Beta, MAX Video Gen, and more

Surprise: Mojo 1.0 is officially in beta! Modular’s 26.3 release includes new features and modalities, but the headline is that we’ve officially hit beta for Mojo 1.0, with a clear plan to finalize Mojo 1.0 in the coming months. We share details below, alongside other key announcements in our 26.3 release including video generation in MAX with Wan 2.2 and MAX framework updates.

Modverse #54: AMD AI DevDay, New Modular Offices, and a Community That Keeps Shipping

There was a lot to celebrate in April: the community shipped GPU renderers, FFmpeg bindings, raylib wrappers, BLAS routines, and a 2D graphics API, just to name a few. The team connected with tons of developers at AMD AI DevDay and our joint meetup with AMD, two new Modular offices opened on two different continents, and Gemma 4 launched with same-day support on NVIDIA and AMD. Here’s the April roundup.
