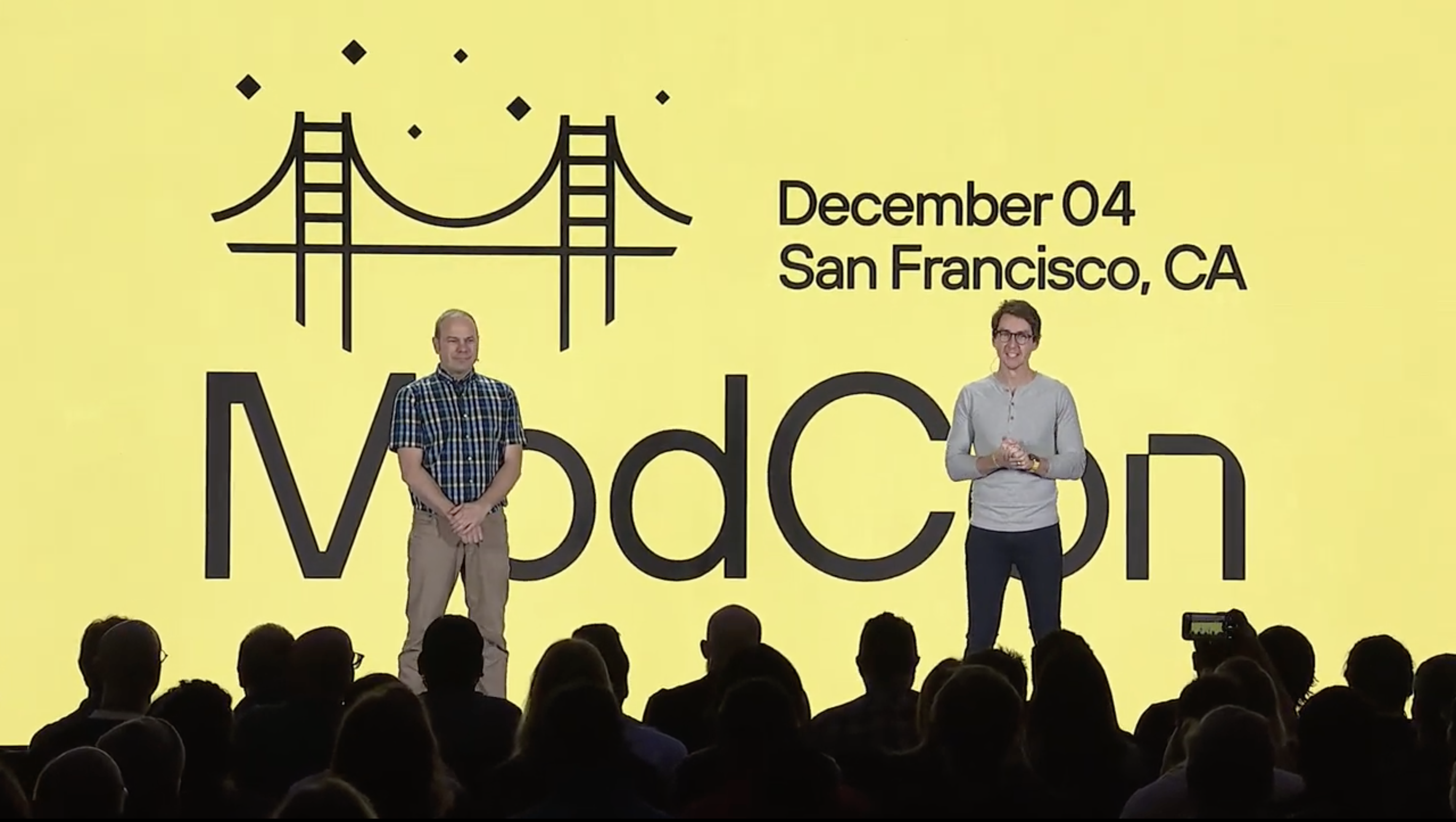

On Dec 4th, the Modular team took the stage at ModCon 2023 to deliver a keynote packed full of exciting announcements of new software, enterprise partnerships and community updates.

“Today we announced a completely new AI developer platform for the world called MAX, and we can’t wait for you all to use it. Our goal is to give AI developers everywhere superpowers to unlock new capabilities,” said Chris Lattner, co-founder and CEO of Modular.

“We want to create a more unified & simpler future for AI developers everywhere, and power the future of Generative AI for the world, and MAX will help developers everywhere do that,” added Tim Davis, co-founder and president of Modular.

We shared four key announcements:

- Modular Accelerated Xecution (MAX): An integrated, composable suite of products that simplify your AI infrastructure and give you everything you need to deploy low-latency, high-throughput generative and traditional inference pipelines into production. MAX will be available in a free, non-commercial Developer Edition and a paid, commercial Enterprise Edition in early 2024.

- A strategic partnership with Amazon Web Services (AWS) to bring MAX Enterprise Edition exclusively to AWS production services everywhere, including unparalleled performance on the AWS Graviton platform.

- A technology partnership with NVIDIA to bring all the benefits of their accelerated compute platform to MAX, unifying and simplifying heterogeneous CPU+GPU development for AI developers everywhere.

- Major new features: MAX Engine extensibility with Mojo, MAX Engine GPU support, release of Mojo SDK v0.6 and open-sourcing of Mojo documentation. We are also immediately expanding our Early Access Program for MAX Engine – sign up now!

ModCon 2023 was Modular’s first-ever developer conference and featured some of the most influential AI leaders and researchers in the world – Bratin Saha (VP, AWS), Kari Ann Briski (VP, NVIDIA), Bryan Catanzaro (VP, NVIDIA), Lex Fridman (AI Researcher, MIT), Shawn (Swyx) Wang (Latent.Space), Damien Sereni (PyTorch Director, Meta), Jeremy Howard (Fast.ai), and Michele Catasta (VP, Replit), to name a few.

Over 350 people attended ModCon in person and thousands more watched the keynote on the livestream. If you missed the keynote, you can catch the replay on YouTube.

Let’s recap the key announcements.

MAX: Modular Accelerated Xecution

The top highlight of ModCon 2023 was the announcement of MAX: the Modular Accelerated Xecution platform. MAX is composed of the MAX Engine, MAX Serving, and the Mojo programming language – everything you need to deploy low-latency, high-throughput inference pipelines into production.

- MAX Engine (formerly AI Engine) is a unified inference library that optimizes and executes AI models from popular AI frameworks like TensorFlow, PyTorch, and ONNX, across all CPU architectures and NVIDIA GPUs with unparalleled performance and cost savings. MAX Engine supports all your generative and traditional AI workloads with drop-in compatibility.

- MAX Serving is a unified serving framework built to serve models with the MAX Engine. MAX Serving provides drop-in compatibility with existing inference servers such as NVIDIA Triton, TF Serving and TorchServe, and integrates easily into existing container orchestration services such as Kubernetes.

- Mojo🔥is the first programming language purpose-built for AI. It combines the usability of Python with the performance of C. Mojo provides a unified programming model across CPUs and GPUs. MAX Engine’s Mojo API can be used to define model graphs and extend models with custom operations, allowing you to write entire ML pipelines in one language, making it ideal for taking AI from research to production.

“GenAI is nearly impossible to scale, even for the world's largest and most sophisticated companies,” shared Eric Johnson, Head of Product at Modular. “MAX delivers unparalleled performance, usability, and extensibility so you can actually bring GenAI to production, economically and efficiently. MAX gives your AI engineers superpowers, enabling them to innovate, develop and deploy models faster than ever before.”

MAX will be available in both developer and enterprise editions starting in early 2024. Register at modul.ar/max to be notified of when MAX is generally available.

Modular x AWS partnership

We announced an exclusive partnership with Amazon Web Services, the world’s leading and largest cloud server provider. Together, we are bringing the benefits of the MAX Platform to AWS production services everywhere, powering innovative AI features for billions of users around the world.

“At AWS we are focused on powering the future of AI by providing the largest enterprises and fastest-growing startups with services that lower their costs and enable them to move faster. The MAX Platform supercharges this mission for our millions of AWS customers, helping them bring the newest GenAI innovations and traditional AI use cases to market faster,” said Bratin Saha, AWS VP of Machine Learning & AI services.

Read more about our partnership on the AWS x Modular Partnership blog post.

Modular x NVIDIA partnership

We announced an exclusive technology partnership with NVIDIA, the world leader in AI accelerated computing, to bring the power of the Modular Accelerated Xecution (MAX) Platform to their AI systems. This will enable developers and enterprises to build and ship AI into production like never before, by unifying and simplifying the AI software stack through MAX, delivering unparalleled performance, usability, and extensibility to make scalable AI a reality.

MAX GPU provides state-of-the-art support for NVIDIA H100, H200, A100, and L40 series GPU accelerators, along with the new Grace CPU Superchip. This hardware will be well integrated with all components of MAX, including MAX Engine, MAX Serving and Mojo, and will bring unparalleled heterogeneous computing to AI. Developers now have one toolchain that scales to all their AI use cases - GenAI and other AI alike - unlocking novel CPU+GPU programming models for unrivaled performance and lower cost.

“Developers everywhere are helping their companies adopt and implement generative AI applications that are customized with the knowledge and needs of their business,” said Dave Salvator, director of AI and Cloud at NVIDIA. “Adding full-stack NVIDIA accelerated computing support to the MAX platform brings the world’s leading AI infrastructure to Modular’s broad developer ecosystem, supercharging and scaling the work that is fundamental to companies’ business transformation.”

Read more about our partnership on the NVIDIA x Modular Partnership blog post.

MAX Engine supercharged with new capabilities

MAX Engine is an AI model compiler and high-performance runtime that delivers state-of-the-art inference performance on popular AI models. Today, we announced three exciting new capabilities in MAX Engine that improve its performance and usability:

- MAX Engine extensibility with Mojo

- MAX Engine Graph API

- MAX GPU

MAX Engine extensibility with Mojo

We announced new APIs that allow developers to use Mojo to easily extend models with custom operations. With this capability, you no longer need to write code in C++ and CUDA, build shared library dependencies or load them at runtime. With MAX Engine and Mojo, the workflow is streamlined: simply write all custom operations in Mojo and the MAX Engine will natively analyze the operations and perform graph fusions to create a highly optimized model for improved performance.

“MAX increases AI model performance while simultaneously reducing AI stack complexity,” said Chris. “It unlocks AI researchers and kernel developers who want to deliver the highest level of inference performance without dependencies.”

MAX Engine Graph API

We also announced the MAX Graph APIs, which enable developers to define models directly in Mojo. These simple APIs allow users to define models as a graph using operators (e.g., Matrix Multiply, ReLU, etc.) without any dependencies and execute the graph directly with the MAX Engine. MAX Engine performs graph optimization and kernel generation for improved runtime performance. For models that require pre- and post-processing steps that are not part of the model, the Graph API lets you extend the model with custom pre- and post-processing ops – all in Mojo.
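To make the idea of "a model as a graph of operators" concrete, here is a minimal toy sketch in plain Python. It is not the MAX Graph API and not Mojo: the `Node` class and the `constant`, `matmul`, `relu`, and `evaluate` helpers are hypothetical names invented for this illustration, and the evaluator simply walks the graph with no optimization or kernel generation.

```python
# Toy illustration only: every name here is hypothetical, not the MAX Graph API.

class Node:
    """One operator in the graph, plus the nodes that feed into it."""

    def __init__(self, op, inputs=(), value=None):
        self.op = op
        self.inputs = tuple(inputs)
        self.value = value  # only used by constant nodes


def constant(value):
    return Node("const", value=value)


def matmul(a, b):
    return Node("matmul", (a, b))


def relu(x):
    return Node("relu", (x,))


def evaluate(node):
    """Recursively execute the graph rooted at `node` on nested lists."""
    if node.op == "const":
        return node.value
    args = [evaluate(n) for n in node.inputs]
    if node.op == "matmul":
        a, b = args
        # zip(*b) iterates over the columns of b.
        return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
                for row in a]
    if node.op == "relu":
        return [[max(0, v) for v in row] for row in args[0]]
    raise ValueError(f"unknown op: {node.op}")


# Build the graph first, then execute it as a separate step.
x = constant([[1, -2], [3, 4]])
w = constant([[1, 0], [0, -1]])
graph = relu(matmul(x, w))
result = evaluate(graph)
print(result)
```

Separating graph construction from execution is what gives an engine room to optimize: because the whole graph is known before anything runs, a real compiler could fuse the matrix multiply and ReLU into one kernel instead of evaluating them node by node as this sketch does.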

MAX GPU

Today, we announced that MAX supports NVIDIA GPUs to accelerate the most demanding AI workloads. MAX GPU support gives you a single programming language across GPUs and CPUs with different architectures and a consistent set of developer tools. This means you don’t have to re-write projects for different hardware or maintain different AI/ML stacks – one for CPUs and another for GPUs.

With MAX GPU support, Mojo code "just works" on GPUs, and lowers the barrier to GPU programming with high-level abstractions. By leveraging Mojo, MAX GPU allows you to program high-performance code for NVIDIA GPUs and gives you full control over GPU-specific primitives. It also has out-of-the-box integration into the vast array of NVIDIA developer tools for GPUs.

MAX GPU is currently in preview. Stay tuned for more details at NVIDIA GTC in March 2024.

Release of Mojo SDK v0.6 and open-sourcing Mojo documentation

Today, we announced the release of Mojo SDK v0.6 with a slew of exciting features in the core language and standard library. Head over to developer.modular.com to download Mojo SDK v0.6 today! For a full list of new features, changes and bug fixes check out the changelog. Here is a quick summary:

Traits

The most anticipated feature in the Mojo community has arrived! Traits, introduced in this release, allow expressing generic functions without sacrificing performance. Traits are one example of how we bring proven techniques from languages like Rust to Mojo, enabling Python developers to learn and benefit from modern language technologies.

For a deeper discussion on Mojo traits, check out the examples in the Mojo manual and changelog. We also have a developer blog post on this topic coming soon! Subscribe to our newsletter to be notified.
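For readers coming from Python, a rough analogy may help. The sketch below is Python, not Mojo, and uses `typing.Protocol` to express the same core idea: a generic function that accepts any type providing a required set of methods. The `Shape`, `Square`, `Rect`, and `total_area` names are hypothetical. The analogy is loose, since Mojo checks trait conformance at compile time, whereas Python protocols are checked only by an optional static type checker.

```python
from typing import Iterable, Protocol


class Shape(Protocol):
    """The required interface: anything with an `area()` method qualifies."""

    def area(self) -> float: ...


class Square:
    def __init__(self, side: float) -> None:
        self.side = side

    def area(self) -> float:
        return self.side * self.side


class Rect:
    def __init__(self, width: float, height: float) -> None:
        self.width = width
        self.height = height

    def area(self) -> float:
        return self.width * self.height


def total_area(shapes: Iterable[Shape]) -> float:
    """Generic over any type that satisfies the Shape interface."""
    return sum(shape.area() for shape in shapes)


total = total_area([Square(2.0), Rect(2.0, 3.0)])
print(total)
```

Neither `Square` nor `Rect` inherits from `Shape`; they qualify simply by implementing `area()`. For the actual Mojo syntax, the examples in the Mojo manual and changelog are the authoritative source.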

Mojo CLI, REPL and Language Server enhancements

New features and enhancements don’t stop at core language and the standard library. Mojo SDK v0.6 also improves the Mojo REPL, Mojo Language Server and Mojo CLI. The Mojo REPL environment now supports indented expressions. And the Mojo Language Server now shows documentation when using code completion on modules or packages in import statements. It also adds support for processing code examples, defined as markdown Mojo code blocks. Mojo CLI also includes improvements to how it processes command line arguments.

Open-source Mojo documentation

From the beginning we’ve shared our plans with respect to open-sourcing Mojo, and we’ve also discussed this in our Q&A livestreams and FAQ section in the documentation.

Today, we announced that Mojo documentation is now open-source. And we’re committing to open-sourcing the Mojo standard library in Q1 2024. We look forward to community contributions to documentation starting today and standard library in the near future. Join the Modular community on GitHub and Discord.

Stay tuned for a deeper-dive blog post on Mojo SDK v0.6 features and an upcoming Modular community Q&A livestream where you can get your questions answered by the Mojo team.

♥️ Modular Community Updates ♥️

Our community members inspire us every day to build amazing products. In just 6 months our community of developers has grown to 150k developers from over 50k enterprises globally. And we’re just getting started!

Since making the Mojo SDK available to developers in September, we’ve seen over 56k downloads and some amazing community projects — such as llama2.mojo — in just 2 months. To celebrate community projects and shine much deserved light on them, we launched the Modular Weekly Developer Newsletter and our community spotlight blog posts.

The Modular community on Discord has grown to 22k+ members from more than 150 countries. This is where our community members come together every day to learn, share feedback, help each other and collaborate on amazing projects. We’re ever grateful for the amazing Mojo champions Aydyn, Lukas, Kent, Mitchell and Maxim for dedicating their free time to the community on Discord. We also started regular Modular Community Q&A livestreams with Modular engineers and community guests to showcase their projects, answer questions, and share Modular roadmaps and updates.

We’d like to extend our deepest gratitude to each and every member of our community for your support, feedback, enthusiasm, and commitment. 🙏

We’re just getting started

We are incredibly excited to share these updates with you, and can’t wait for you to try MAX early next year. Sign up to be notified when MAX becomes available in early 2024: modul.ar/max

Mojo SDK v0.6 is available today at developer.modular.com and Mojo documentation is now open-source at modul.ar/github

If you’re at ModCon 2023, don’t forget to attend sessions from AI leaders and researchers. Here’s your guide to all the sessions at ModCon: https://www.modular.com/blog/modcon-2023-sessions-you-dont-want-to-miss

Recordings of talks will be made available on YouTube. Subscribe to the Modular Newsletter or the Modular YouTube channel to be notified when the recordings are available.

Until next time! 🔥