Blog

Democratizing AI Compute Series

Go behind the scenes of the AI industry with Chris Lattner

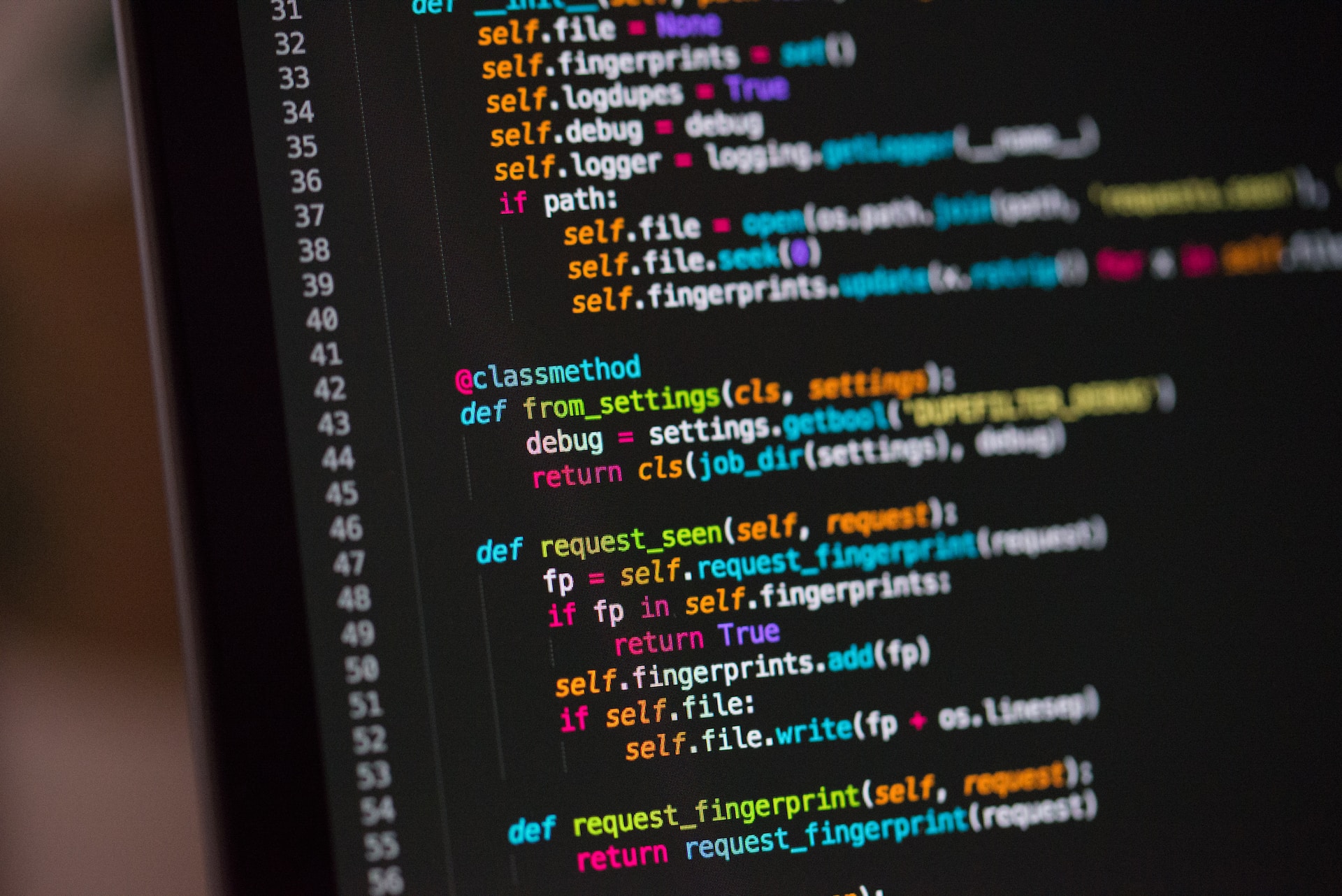

An easy introduction to Mojo🔥 for Python programmers

Learning a new programming language is hard. You have to learn new syntax, keywords, and best practices, all of which can be frustrating when you’re just starting. In this blog post, I want to share a gentle introduction to Mojo from a Python programmer’s perspective.

Modular natively supports dynamic shapes for AI workloads

Today’s AI infrastructure is difficult to evaluate, so many people converge on simple, quantifiable metrics like QPS, latency, and throughput. This is one reason why today’s AI industry is rife with bespoke tools that provide high performance on benchmarks but have significant usability challenges in real-world AI deployment scenarios.

AI’s compute fragmentation: what matrix multiplication teaches us

AI is powered by a virtuous circle of data, algorithms (“models”), and compute. Growth in one increases demand on the others and can significantly affect the developer experience in areas such as usability and performance. Today, we have more data and more AI model research than ever before, but compute isn’t scaling at the same speed due to … well, physics.

If AI serving tech can’t solve today’s problems, how do we scale into the future?

The technological progress that has been made in AI over the last ten years is breathtaking — from AlexNet in 2012 to the recent release of ChatGPT, which has taken large foundational models and conversational AI to another level.

Part 2: Increasing development velocity of giant AI models

The first four requirements address one fundamental problem with how we've been using MLIR: weights are constant data, but shouldn't be managed like other MLIR attributes. Until now, we've been trying to place a square peg into a round hole, creating a lot of wasted space that's costing us development velocity (and, therefore, money for users of the tools).

Increasing development velocity of giant AI models

Machine learning models are getting larger and larger — some might even say, humongous. The world’s most advanced technology companies have been in an arms race to see who can train the largest model (MUM, OPT, GPT-3, Megatron), while other companies focused on production systems have scaled their existing models to great effect. Through all the excitement, what’s gone unsaid is the myriad of practical challenges larger models present for existing AI infrastructure and developer workflows.

Democratizing Compute

Go behind the scenes of the AI industry in this blog series by Chris Lattner. Trace the evolution of AI compute, dissect its current challenges, and discover how Modular is raising the bar with the world’s most open inference stack.

Matrix Multiplication on Blackwell

Learn how to write a high-performance GPU kernel on Blackwell that is competitive with NVIDIA's cuBLAS implementation, while leveraging Mojo's special features to keep the kernel as simple as possible.

Structured Mojo Kernels

Learn how Mojo simplifies GPU programming with modular kernel architecture, compile-time abstractions, and zero-cost performance across modern GPU hardware.

Software Pipelining for GPU Kernels

Explore software pipelining for GPU kernels from first principles. We formalize dependencies as a graph, solve for the optimal schedule with a constraint solver, and show how it all integrates into MAX via pure Mojo.
