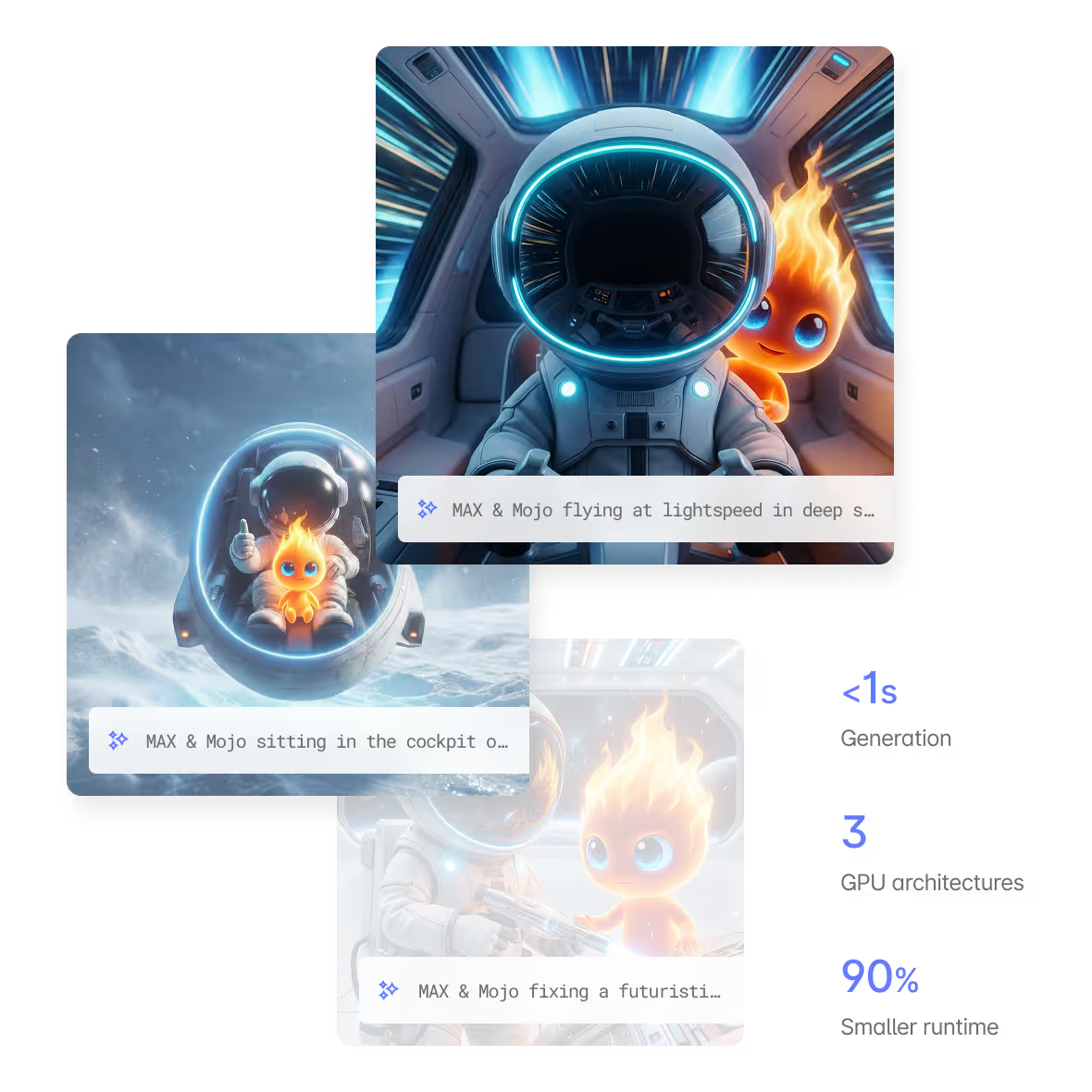

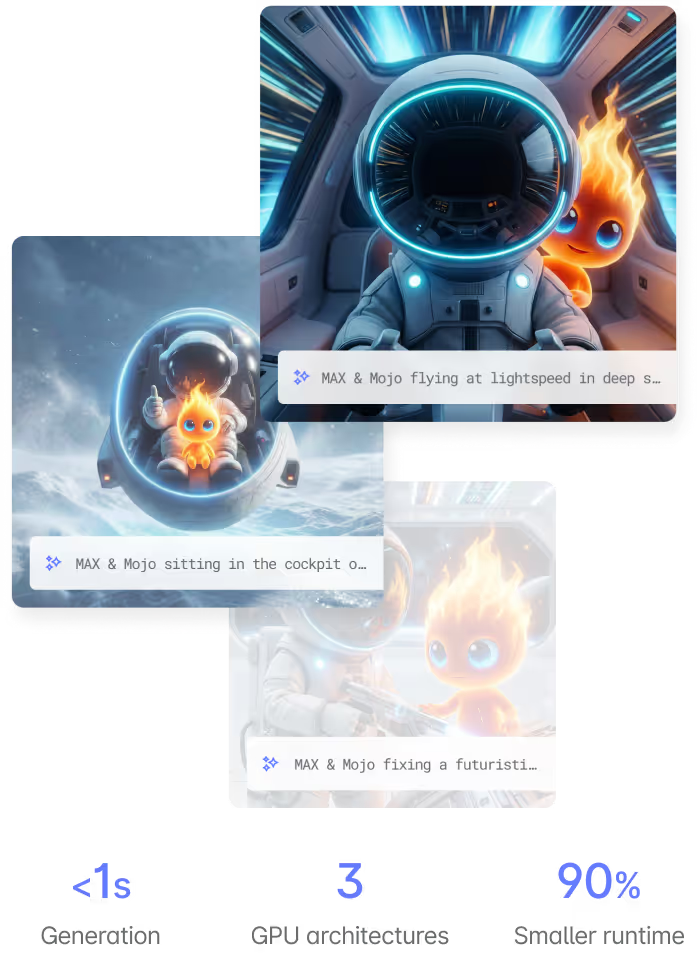

Image Generation at Full Speed on any hardware

Generate production-quality images with compiler-optimized inference. FLUX, Stable Diffusion, and custom diffusion models — running natively on NVIDIA, AMD, and Apple Silicon.

MODEL Spotlight

High-speed open-source image generation without sacrificing quality, optimized for rapid iteration and LoRA fine-tuning workflows. Generate images in under 1s with Modular.

In-context image editing with simple text instructions

Character consistency preservation across multiple edits

Local and global editing capabilities in one unified model

Text-to-image generation with state-of-the-art prompt following

Typography handling for text editing within images

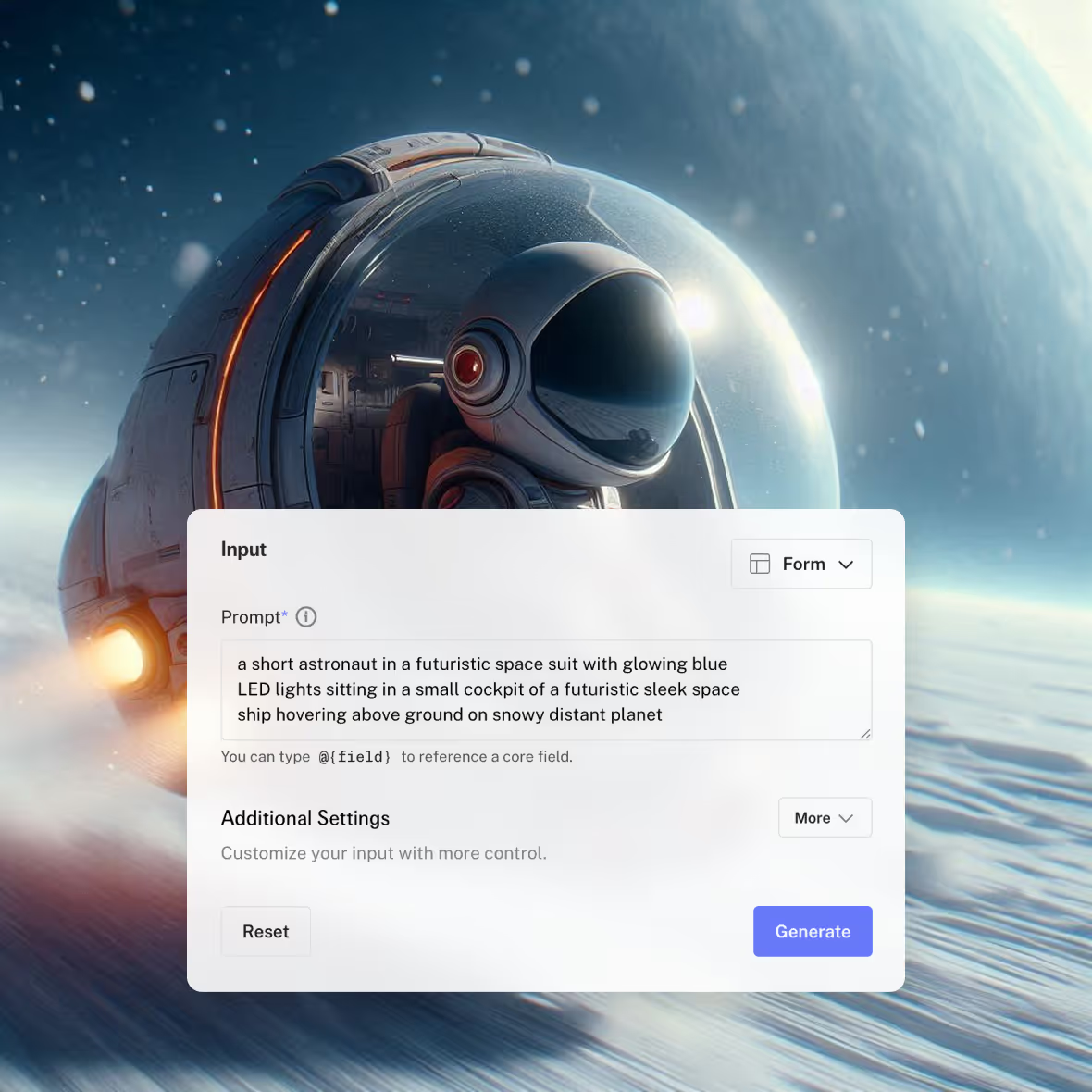

Give it try, right here 👇

Generate images and text (videos coming soon), then request access for a full account with API token. Request a demo to get priority access.

Why run image generation on Modular?

Every other image generation platform is locked to NVIDIA. Modular compiles diffusion models to run natively on any GPU — same model, same code, different silicon.

Native image and video support - not an afterthought

vLLM and SGLang were built for text. They don't support diffusion models out of the box. Running FLUX, Stable Diffusion, or video generation on those stacks means bolting on separate infrastructure, separate pipelines, separate ops. MAX serves image and video models natively alongside LLMs - same container, same API, same compiled performance.

VLLM AND SGLANG DON'T DO THIS. MAX DOES.

Up to 4x PyTorch performance

MAX's MLIR compiler fuses the entire diffusion pipeline - UNet/DiT, VAE, text encoder, scheduler - into a single optimized graph. The result is up to 4x faster inference than native PyTorch. Not from wrapping a runtime. From compiling every stage together and eliminating the overhead between them.

4X PYTORCH. COMPILED, NOT WRAPPED.

Compiler-fused diffusion pipelines

Diffusion inference isn't a single model call - it's dozens of denoising steps through a UNet or DiT, plus VAE decoding, text encoding, and scheduling. MAX compiles the full pipeline as one graph, fusing operations across steps and eliminating memory round-trips between stages. That's where the 4x comes from.

FULL PIPELINE COMPILED AS ONE GRAPH. THAT'S THE 4X.

Hardware-portable diffusion

Every other image generation platform is locked to NVIDIA. MAX compiles diffusion models to run natively on NVIDIA and AMD from the same container. Same model, same code, different silicon. When AMD offers better spot pricing for your batch generation workloads, shift without changing anything.

NVIDIA + AMD. SAME DIFFUSION PIPELINE. SAME CONTAINER.

Scale image generation in Our Cloud

Image generation traffic is bursty - a design tool launch, an API integration going live, a campaign spike. Modular Cloud's compiler-aware auto-scaling spins up replicas in seconds with a <700MB runtime. Scale to zero when idle. Dedicated endpoints on $/minute pricing for production, serverless for prototyping. Forward-deployed engineers tune batch sizes and scheduling for your specific traffic patterns.

BURST TO MEET DEMAND. SCALE TO ZERO WHEN QUIET.

FLUX, Stable Diffusion, and custom models ready to serve

Pre-optimized support for FLUX, Stable Diffusion XL, SD3, and more - deploy on Modular Cloud in minutes. Fine-tuned a diffusion model on your own data? Bring custom LoRAs, custom architectures, or proprietary models. MAX compiles and serves them with the same 4x performance advantage.

FLUX AND SDXL TODAY. YOUR CUSTOM MODEL TOMORROW.

Built for production image applications

Generate marketing visuals, product mockups, and social content at sub-second speeds. Serve from the GPU vendor with the best price-performance ratio at any given moment.

Consistent, low-latency avatar generation for gaming, virtual worlds, and social platforms. Run the same pipeline on NVIDIA in the cloud and Apple Silicon on-device.

Fine-tuned LoRAs, custom architectures, novel schedulers — deploy any diffusion model with Mojo kernels for maximum performance on any hardware target.

Modular vs. the competition

- Up to 4x PyTorch Performance

MLIR compiler fuses the full diffusion pipeline - UNet/DiT, VAE, text encoder, scheduler - into one optimized graph. Up to 4x faster than native PyTorch. Compiled, not wrapped.

- Native Image + Video Serving

MAX serves diffusion models natively alongside LLMs in the same container and API. vLLM and SGLang don't support image or video generation at all. No bolted-on pipelines.

- Hardware Portability

Run FLUX, SDXL, and custom diffusion models on NVIDIA and AMD from the same container. Shift workloads based on price-performance. No other platform offers portability like us.

- Faster & Lighter Runtime

Replicas spin up in seconds, not minutes. At image generation scale - where traffic is bursty and cold starts kill UX - this is the difference between usable and unusable.

- Fast, reliably cloud serving

Image generation traffic is bursty - a design tool launch, an API integration going live, a campaign spike. Our Cloud's aware auto-scaling spins up replicas in seconds.

- Alternatives

- 1x PyTorch Performance

Runtime wrappers over PyTorch and ComfyUI. No compiler-level optimization. No graph fusion across pipeline stages. You get whatever PyTorch gives you.

- Text-Only Inference Stacks

vLLM and SGLang were built for LLMs. Diffusion models require separate infrastructure, separate pipelines, separate ops. Two stacks to maintain. Two surfaces to break.

- Hardware lock-in

Every image generation alternative is locked to a single GPU vendor. Switching hardware means rewriting your stack. No pricing leverage. No supply flexibility.

- 7GB+ Runtime, Slow Cold Starts

Heavy dependency chains. Minutes to cold start. When a traffic spike hits your image API, users wait. At scale, that overhead compounds into real cost and real churn.

- No Kernel Access

Limited to framework-level configuration. Can't customize schedulers, attention, or quantization at the kernel level. What you get is what you get.

Get started with Modular

Schedule a demo of Modular and explore a custom end-to-end deployment built around your models, hardware, and performance goals.

Distributed, large-scale online inference endpoints

Highest-performance to maximize ROI and latency

Deploy in Modular cloud or your cloud

View all features with a custom demo

Book a demo

Talk with our sales lead Jay!

30min demo. Evaluate with your workloads. Ask us anything.

Book a demo for a personalized walkthrough of Modular in your environment. Learn how teams use it to simplify systems and tune performance at scale.

Custom 30 min walkthrough of our platform

Cover specific model or deployment needs

Flexible pricing to fit your specific needs

Book a demo

Talk with our sales lead Jay!

Run any open source model in 5 minutes, then benchmark it. Scale it to millions yourself (for free!).

Install Mojo and get up and running in minutes. A simple install, familiar tooling, and clear docs make it easy to start writing code immediately.