Faster AI coding infrastructure on any hardware

The inference backbone for AI-powered code editors and developer tools. Serve coding LLMs with compiler-optimized latency on NVIDIA, AMD, and Apple Silicon — from cloud to on-device.

Why AI coding companies choose Modular

Compiler-native speculative decoding

Code generation is a natural fit for speculative decoding: a smaller draft model proposes tokens, and the full model verifies them in a single forward pass. MAX compiles both models and the verification logic as one fused graph through MLIR, so there is no runtime coordination overhead between draft and target. The result is lower per-token latency and higher throughput on the long completions that code generation demands.

DRAFT + VERIFY COMPILED AS ONE FUSED GRAPH
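For readers new to the technique, here is a minimal sketch of greedy speculative decoding in plain Python. It is not MAX's API or its fused-graph implementation. The toy models `draft_next` and `target_next` are hypothetical stand-ins that return the next token id for a prefix, and the verification step loops over positions where a real server would run one batched forward pass. What it does show is the key property: the output is identical to greedy decoding with the target model alone, and the draft model only reduces how many target passes are needed.

```python
from typing import Callable, List

# A toy "model": maps a token prefix to its greedy next-token id.
NextToken = Callable[[List[int]], int]


def speculative_decode(prefix: List[int], draft_next: NextToken,
                       target_next: NextToken, max_new_tokens: int,
                       k: int = 4) -> List[int]:
    """Greedy speculative decoding; output equals greedy decoding with target_next."""
    tokens = list(prefix)
    goal = len(prefix) + max_new_tokens
    while len(tokens) < goal:
        # 1. Draft: the small model proposes up to k tokens autoregressively.
        n = min(k, goal - len(tokens))
        draft: List[int] = []
        for _ in range(n):
            draft.append(draft_next(tokens + draft))
        # 2. Verify: the target's prediction at every draft position, plus one
        #    extra position. A real server computes these in one batched pass.
        target_preds = [target_next(tokens + draft[:i]) for i in range(n + 1)]
        # 3. Accept the longest prefix on which draft and target agree.
        accepted = 0
        while accepted < n and draft[accepted] == target_preds[accepted]:
            accepted += 1
        tokens.extend(draft[:accepted])
        # 4. Always gain one target token: the correction at the first mismatch,
        #    or a "bonus" token when every draft token was accepted.
        tokens.append(target_preds[accepted])
    return tokens[:goal]


def greedy_decode(prefix: List[int], next_fn: NextToken, max_new_tokens: int) -> List[int]:
    """Baseline: plain autoregressive greedy decoding, one token per pass."""
    tokens = list(prefix)
    for _ in range(max_new_tokens):
        tokens.append(next_fn(tokens))
    return tokens


# Hypothetical toy models: the draft agrees with the target except after
# tokens divisible by 5, so some drafted tokens get rejected.
def target_next(seq: List[int]) -> int:
    return (seq[-1] * 3 + 1) % 17


def draft_next(seq: List[int]) -> int:
    guess = (seq[-1] * 3 + 1) % 17
    return guess if seq[-1] % 5 else (guess + 1) % 17


print(speculative_decode([2], draft_next, target_next, max_new_tokens=12))
```

The draft length `k` is a tuning knob in this sketch: longer drafts save more target passes when the draft model agrees often, and waste work when it does not.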

GPU vendor flexibility at scale

Code completion traffic is massive and sustained: every keystroke can trigger inference. Run on NVIDIA or AMD from the same container and shift workloads based on price-performance. When you're serving millions of completions per day, GPU vendor choice compounds into significant cost savings.

MILLIONS OF COMPLETIONS. GPU CHOICE MATTERS.

90% smaller serving footprint

Code generation at scale means thousands of model replicas. MAX's runtime is under 700MB, versus 7GB+ for alternatives, which means 10x faster replica spin-up, dramatically lower storage costs, and simpler orchestration. When a new model version drops, roll it out across your fleet in seconds, not minutes.

<700MB PER REPLICA. 10X FASTER ROLLOUTS AT SCALE.

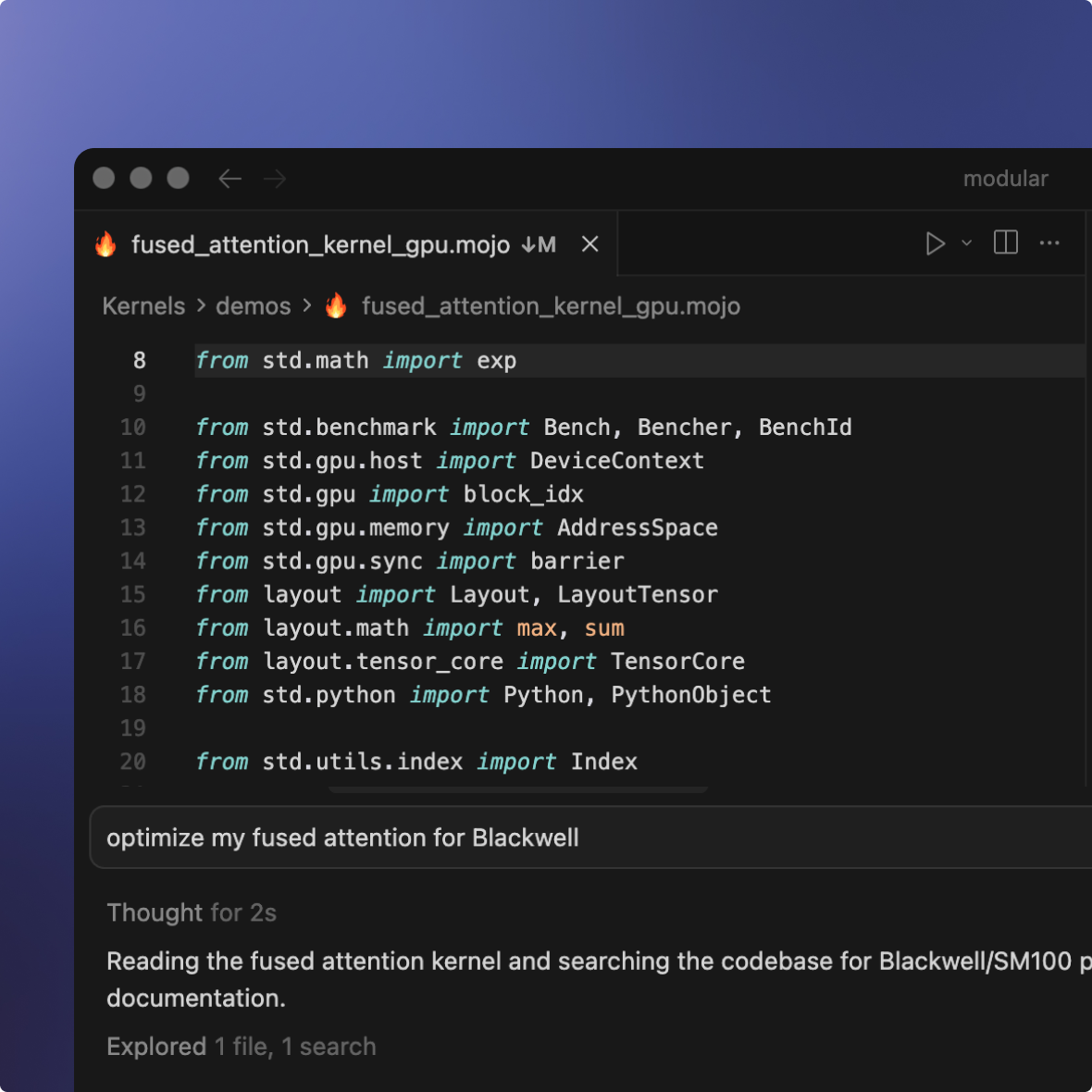

Custom attention and decoding strategies

Building a novel speculative decoding strategy? Sliding-window attention for code-specific patterns? Repository-aware context management? Write it in Mojo with full kernel access. Compile once for any GPU target.

Full-stack programmability in Mojo

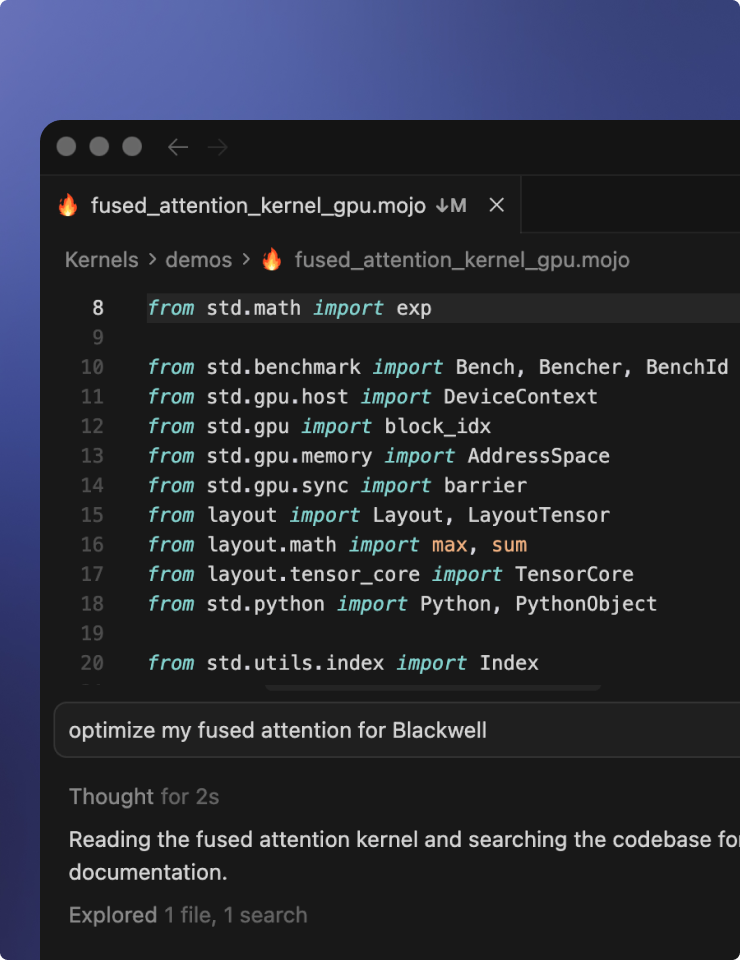
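Sliding-window attention, one of the customizations mentioned above, restricts each query to the most recent `window` keys. The sketch below illustrates the idea in plain Python rather than Mojo and is not a Modular kernel or API: it builds a causal sliding-window mask and applies it in single-head, unbatched scaled dot-product attention. A production kernel would fuse the masking into the attention computation instead of materializing the mask.

```python
import math
from typing import List

Vector = List[float]


def sliding_window_mask(seq_len: int, window: int) -> List[List[bool]]:
    """mask[i][j] is True when query i may attend to key j: causal and within `window`."""
    return [[0 <= i - j < window for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_attention(q: List[Vector], k: List[Vector], v: List[Vector],
                             window: int) -> List[Vector]:
    """Single-head, unbatched scaled dot-product attention under the mask (window >= 1)."""
    n, d = len(q), len(q[0])
    mask = sliding_window_mask(n, window)
    out: List[Vector] = []
    for i in range(n):
        # Keys this query may see: itself and up to window - 1 predecessors.
        allowed = [j for j in range(n) if mask[i][j]]
        scores = [sum(a * b for a, b in zip(q[i], k[j])) / math.sqrt(d) for j in allowed]
        # Numerically stable softmax over the allowed keys only.
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        # Output is the softmax-weighted average of the allowed value vectors.
        out.append([sum(w * v[j][c] for w, j in zip(weights, allowed)) / total
                    for c in range(len(v[0]))])
    return out


print(sliding_window_mask(4, 2))
```

Because each query touches at most `window` keys, the attention cost per token stays constant as the sequence grows, which is the usual motivation for this pattern.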

The best code LLMs

Kimi K2.5, DeepSeek V3.2, and every major code-optimized model, pre-optimized and ready to serve out of the box. New code models land in MAX within days of release. Run them on NVIDIA or AMD with zero configuration. Fine-tuned a code model on your proprietary codebase? Deploy it on the same infrastructure with the same compiled performance.

THE LATEST OPEN-WEIGHT CODING LLMS. READY FOR YOU.

Production use cases

Real-time autocomplete as developers type. This is the workload that defines AI coding: sub-200ms TTFT, high throughput during business hours, and graceful scaling during off-peak hours. MAX's continuous batching handles traffic spikes without latency degradation. Fireworks serves Cursor at 3x cost savings; Modular adds hardware portability on top.

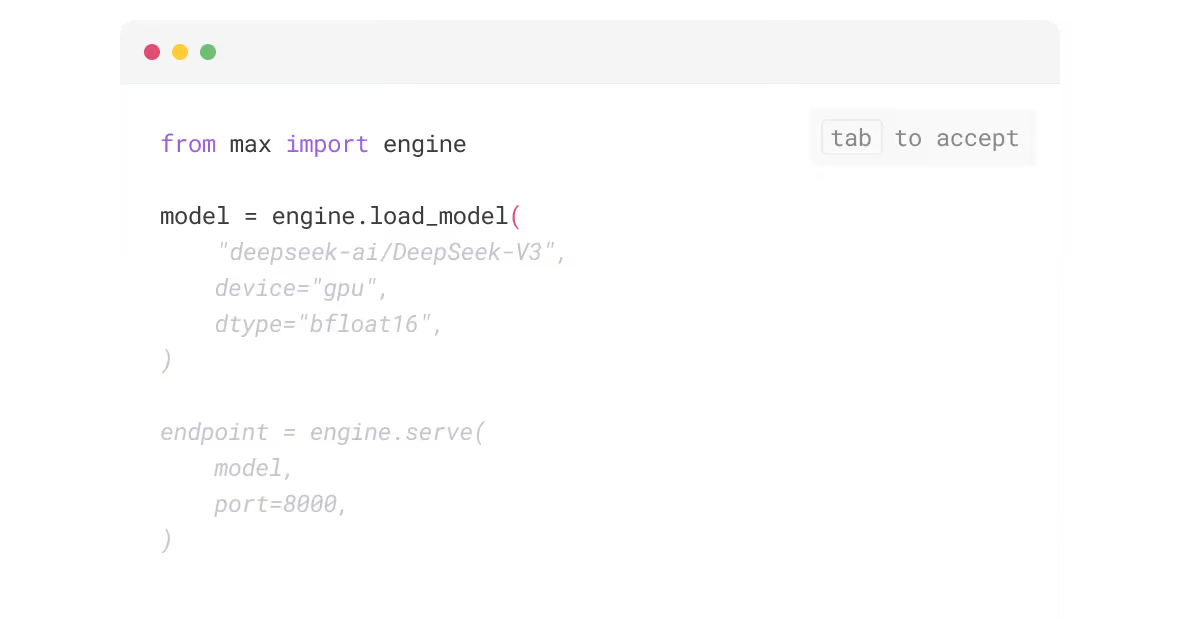
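Continuous batching schedules work at the granularity of decode steps: a finished request frees its batch slot immediately, and a waiting request joins on the next step instead of waiting for the whole batch to drain. The simulation below is a generic sketch of that idea, not MAX's scheduler. The `Request` type is hypothetical, and knowing each request's output length in advance is an assumption made only to drive the simulation.

```python
from collections import deque
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Request:
    rid: str
    tokens_to_generate: int  # assumed known up front, for simulation only


def continuous_batching(requests: List[Request], max_batch: int) -> Dict[str, int]:
    """Return the decode step at which each request finishes."""
    waiting = deque(requests)
    active: Dict[str, int] = {}  # rid -> tokens still to generate
    finished_at: Dict[str, int] = {}
    step = 0
    while waiting or active:
        # Before every step, admit waiting requests into any free slots.
        while waiting and len(active) < max_batch:
            req = waiting.popleft()
            active[req.rid] = req.tokens_to_generate
        # One decode step produces one token for every active request.
        step += 1
        for rid in list(active):
            active[rid] -= 1
            if active[rid] == 0:
                # Free the slot immediately so a waiting request can join next step.
                del active[rid]
                finished_at[rid] = step
    return finished_at


def static_batching(requests: List[Request], max_batch: int) -> Dict[str, int]:
    """Baseline: each batch occupies the GPU until its longest request finishes."""
    finished_at: Dict[str, int] = {}
    step = 0
    for start in range(0, len(requests), max_batch):
        batch = requests[start:start + max_batch]
        for req in batch:
            finished_at[req.rid] = step + req.tokens_to_generate
        step += max(req.tokens_to_generate for req in batch)
    return finished_at


reqs = [Request("a", 2), Request("b", 10), Request("c", 2), Request("d", 2)]
print(continuous_batching(reqs, max_batch=2))
print(static_batching(reqs, max_batch=2))
```

With these inputs, the short requests `c` and `d` finish at steps 4 and 6 under continuous batching instead of step 12, because they no longer wait behind the long request `b`.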

Conversational code assistance with long-context models. Serve 256K+ context windows efficiently with MAX's KV-cache optimizations. The same model serves chat, completion, and review endpoints from one deployment. Sourcegraph achieved 2.5x higher fix acceptance on Fireworks; Modular delivers the same compiler-level optimization with GPU portability.

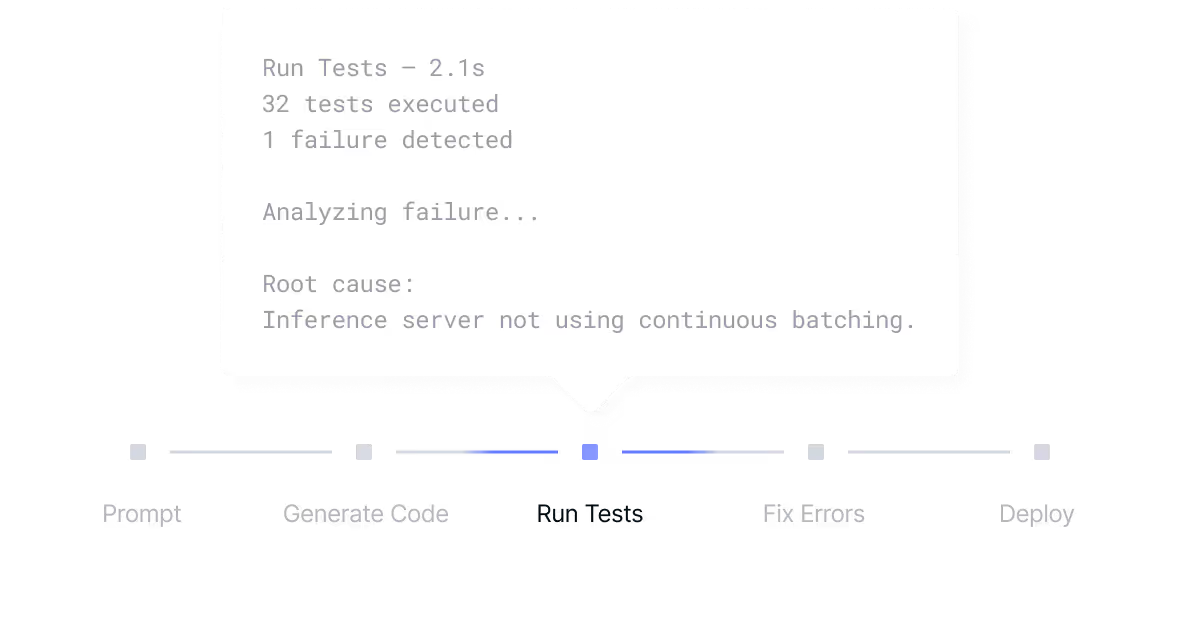
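A back-of-the-envelope size estimate shows why long context needs KV-cache optimization. For a standard transformer with grouped-query attention, the cache stores one key and one value vector per layer, per KV head, per token. The model dimensions below are hypothetical (32 layers, 8 KV heads, head dimension 128, 16-bit values) and do not describe any specific model MAX serves.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2, batch_size: int = 1) -> int:
    """KV-cache size: one key and one value vector per layer, KV head, and token."""
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch_size


# Hypothetical model shape with 16-bit (2-byte) cache entries.
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=256 * 1024)
print(f"{size / 2**30:.0f} GiB for one 256K-token sequence")  # prints "32 GiB for one 256K-token sequence"
```

At 128 KiB per token, a single 256K-token conversation needs about 32 GiB of cache under these assumptions, which is why KV-cache memory management matters so much for long-context serving.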

Multi-step code generation, testing, and iteration. AI coding agents (Claude Code, Codex, Devin) require high-throughput batch inference alongside low-latency interactive completions. MAX serves both workload patterns from one deployment, on whichever GPU has the best price-performance.

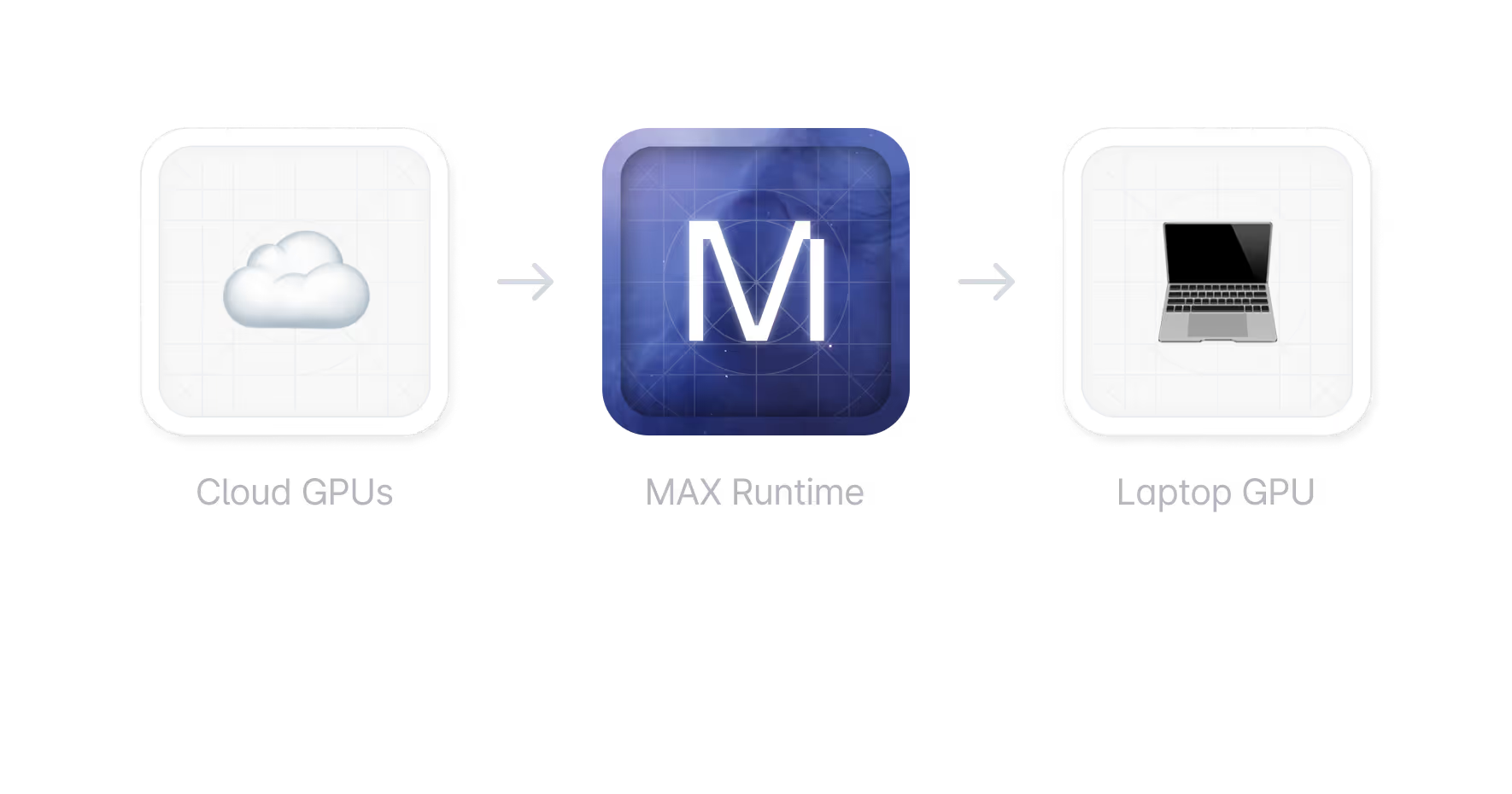

Local code completion on developer laptops using Apple Silicon GPU inference. For air-gapped enterprise environments, classified codebases, or offline development with no network round trip. No other inference platform offers a cloud-to-device portability path for coding models.

Modular vs. the competition

At scale, the math is simple. A Cursor-class product serving 1M developers at 10 requests per keystroke makes billions of inference calls per day. The GPU bill is the single largest cost line. Hardware portability isn’t a nice-to-have: it’s the difference between profitable and underwater.
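The arithmetic behind this estimate, with $K$ standing for a hypothetical number of keystrokes per developer per day, is:

```latex
N_{\text{calls/day}}
  = \underbrace{10^{6}}_{\text{developers}}
  \times \underbrace{10}_{\text{requests per keystroke}}
  \times K
  = 10^{7}\,K
```

Daily volume therefore reaches $10^{9}$ calls once $K \ge 100$, and grows linearly with typing activity beyond that.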

Modular

- Hardware Portability

Serve on whichever GPU has the best price-performance this quarter. NVIDIA today, AMD tomorrow, both for resilience. Negotiate from strength.

- Efficient Runtime Footprint

A 90% smaller runtime means lower storage, bandwidth, and cold-start costs across your fleet. At 10,000 GPU instances, that adds up to millions per year.

- Hybrid Cloud + On-Device

On-device inference shifts variable workloads to user hardware. Combine cloud and local inference for cost-efficient burst capacity.

- Cross-GPU Speculative Decoding

Compiler-native speculative decoding across all GPU targets. Same speed optimization, any hardware.

Alternatives

- Vendor Lock-In

NVIDIA-only. No pricing leverage. When demand spikes, you pay whatever NVIDIA charges.

- Bloated Runtime Footprint

7GB+ runtime per instance. Storage and bandwidth costs compound across every instance in your fleet.

- Cloud-Only Architecture

Cloud-only. Every keystroke hits your GPU fleet. No local offload path. No hybrid architecture.

- Hardware-Specific Optimization

Speculative decoding on NVIDIA only (FireAttention, vLLM). Rewrite required for any other target architecture.

Get started with Modular

Schedule a demo of Modular and explore a custom end-to-end deployment built around your models, hardware, and performance goals.

Distributed, large-scale online inference endpoints

Highest performance to maximize ROI and minimize latency

Deploy in Modular cloud or your cloud

View all features with a custom demo

Book a demo

Talk with our sales lead Jay!

30-minute demo. Evaluate with your workloads. Ask us anything.

Book a demo for a personalized walkthrough of Modular in your environment. Learn how teams use it to simplify systems and tune performance at scale.

Custom 30-minute walkthrough of our platform

Cover specific model or deployment needs

Flexible pricing to fit your specific needs


Run any open-source model in 5 minutes, then benchmark it. Scale it to millions yourself (for free!).

Install Mojo and get up and running in minutes. A simple install, familiar tooling, and clear docs make it easy to start writing code immediately.