Human-sounding text-to-speech on any hardware

Production voice synthesis with compiler-optimized latency. Serve TTS models on NVIDIA, AMD, and Apple Silicon with the same codebase. No rewrites. No lock-in.

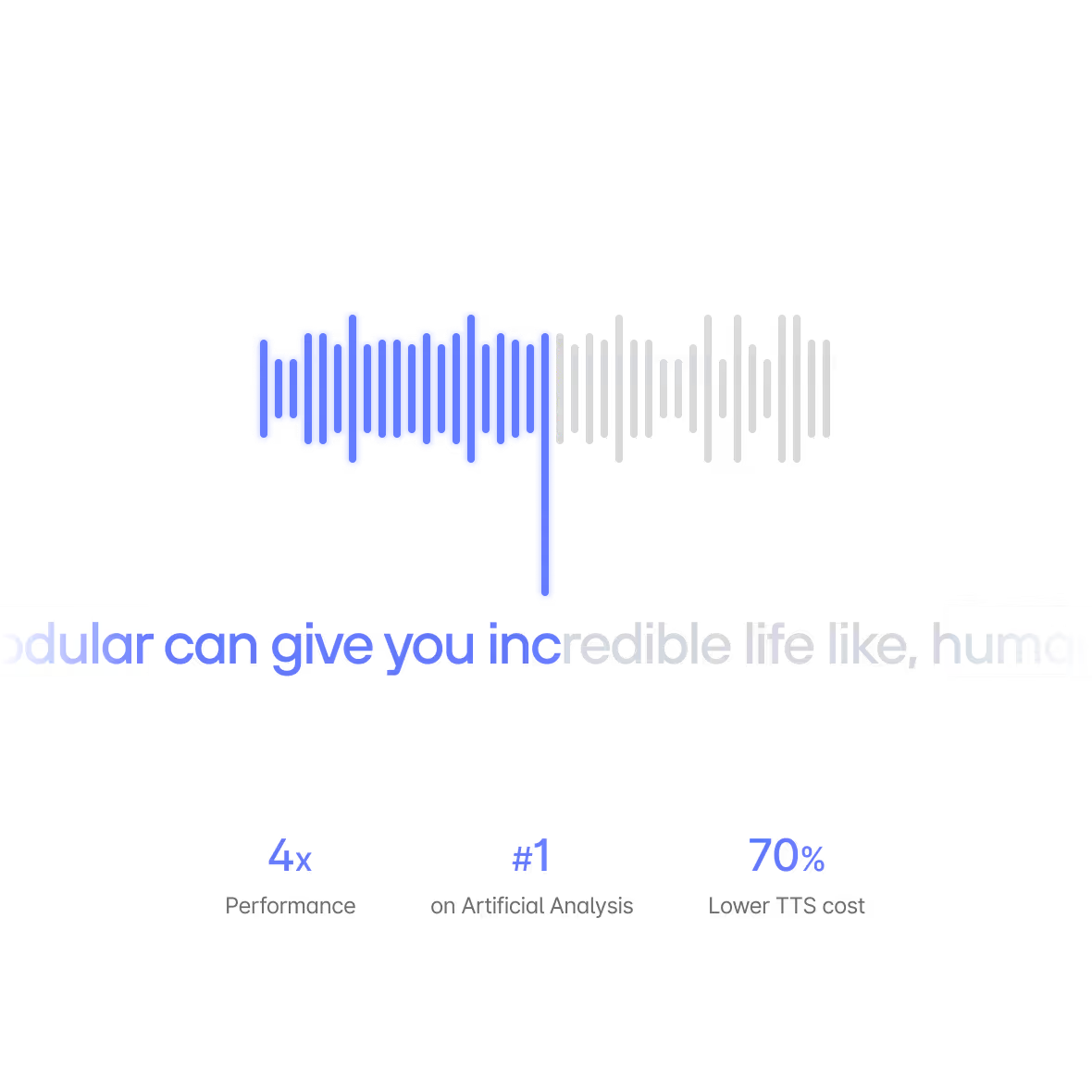

Powering the #1 ranked speech model

“70% lower TTS costs and 4x performance — unlocking both NVIDIA and AMD GPUs for the first time.”

Why run voice synthesis on Modular?

Ultra-low latency voice serving

Voice synthesis demands real-time responses: every millisecond of latency is audible. Modular's AI stack fuses the entire inference path into a single compiled unit, eliminating the overhead that makes wrapper-based stacks too slow for live audio. Continuous batching and KV-cache optimization keep throughput high even under heavy concurrent load.
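To make "continuous batching" concrete, here is a minimal toy scheduler in Python. It is a sketch of the general technique under stated assumptions, not Modular's implementation: the `Request` class, its `tokens_to_generate` field, and the `continuous_batching` function are hypothetical, and the per-request loop stands in for a single batched model forward pass. The key idea is that finished requests leave the batch immediately and waiting requests take their slots on the very next step.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Request:
    rid: str
    tokens_to_generate: int  # hypothetical: audio tokens this request needs
    generated: list = field(default_factory=list)


def continuous_batching(requests, max_batch_size):
    """Toy scheduler: refill free batch slots at every decode step and
    retire finished requests immediately, instead of waiting for the
    slowest request in a fixed batch to finish."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    waiting = deque(requests)
    active = []
    finished = []  # (request id, step at which it completed)
    step = 0
    while waiting or active:
        # Admit waiting requests into any free slots (the "continuous" part).
        while waiting and len(active) < max_batch_size:
            active.append(waiting.popleft())
        # One decode step for every active request (stand-in for a forward pass).
        for req in active:
            req.generated.append(step)
        step += 1
        # Retire completed requests so their slots are reused next step.
        still_running = []
        for req in active:
            if len(req.generated) >= req.tokens_to_generate:
                finished.append((req.rid, step))
            else:
                still_running.append(req)
        active = still_running
    return finished, step


reqs = [Request("a", 2), Request("b", 5), Request("c", 1), Request("d", 3)]
order, total_steps = continuous_batching(reqs, max_batch_size=2)
print(order, total_steps)  # [('a', 2), ('c', 3), ('b', 5), ('d', 6)] 6
```

With a batch size of 2, these four requests finish in 6 decode steps. Static batching of the same requests in arrival order would take 8 steps (5 for the `a`/`b` batch, then 3 for `c`/`d`), because short requests sit idle until the longest one in their batch completes.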

REAL-TIME AUDIO DEMANDS COMPILED PERFORMANCE

Scale to zero, burst to thousands

Voice traffic is spiky: a product launch, a marketing campaign, a viral moment. Modular Cloud's compiler-aware auto-scaling handles bursts without pre-provisioning idle GPUs. Scale to zero when quiet, and spin up replicas in seconds when demand hits. Most voice providers charge for idle capacity. You shouldn't have to pay for it.
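The scale-to-zero behavior described above can be illustrated with a generic target-tracking policy. This is a minimal sketch, assuming a hypothetical `desired_replicas` function, a fixed per-replica concurrency capacity, and an idle timeout; it does not describe how Modular Cloud's compiler-aware scaling actually decides replica counts, which this page does not specify.

```python
import math


def desired_replicas(current, in_flight, per_replica_capacity,
                     max_replicas, idle_seconds, idle_timeout_s=300.0):
    """Hypothetical target-tracking autoscaling policy with scale-to-zero.

    current              -- replicas running now
    in_flight            -- concurrent synthesis requests right now
    per_replica_capacity -- requests one replica can serve concurrently (assumed)
    idle_seconds         -- how long traffic has been at zero
    """
    if per_replica_capacity <= 0:
        raise ValueError("per_replica_capacity must be positive")
    if in_flight == 0:
        # No traffic: keep warm replicas briefly to absorb a follow-up
        # burst, then release them all once the idle timeout has passed.
        return 0 if idle_seconds >= idle_timeout_s else current
    # Traffic present: enough replicas to cover demand, capped at max_replicas.
    needed = math.ceil(in_flight / per_replica_capacity)
    return min(needed, max_replicas)


print(desired_replicas(0, 1200, 16, 100, 0))    # burst from zero -> 75
print(desired_replicas(75, 0, 16, 100, 30))     # briefly idle -> 75
print(desired_replicas(75, 0, 16, 100, 600))    # idle past timeout -> 0
print(desired_replicas(3, 5000, 16, 100, 0))    # demand above cap -> 100
```

The idle timeout is the main design trade-off: a shorter timeout saves more GPU time between spikes, while a longer one avoids cold starts when a second burst follows closely behind the first.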

COMPILER-AWARE AUTO-SCALING. NO IDLE GPU COSTS.

Custom voice model deployment

Bring your proprietary TTS, voice cloning, or speech-to-speech model. Modular Cloud compiles and serves it on dedicated infrastructure, with custom Mojo kernels for novel architectures. Your voice is your brand, so don't run it on someone else's black box.

YOUR VOICE MODEL. CUSTOM KERNELS. MANAGED INFRA.

Dual GPU vendor support

Run voice models on NVIDIA or AMD from the same container. When AMD MI300X offers better price-performance for your TTS workload, shift without rewriting a line of code. At the token volumes voice synthesis requires, GPU vendor choice is a significant cost lever.

NVIDIA + AMD. SAME MODEL. SAME CONTAINER.

On-device with Apple Silicon

MAX compiles voice models natively for Apple Silicon - M-series Macs, iPads, and beyond. Run TTS inference locally with no network round-trip, no cloud dependency, and no data leaving the device. Ideal for privacy-sensitive applications, offline use cases, and edge deployments where latency to a cloud endpoint is unacceptable.

LOCAL INFERENCE. ZERO NETWORK LATENCY. ZERO DATA EGRESS.

Built for production voice applications

Real-time conversational voice with sub-100ms latency. Deploy on NVIDIA for throughput, on AMD for cost, or on both simultaneously for resilience.

Character voice synthesis at scale. Inworld AI serves millions of TTS requests across GPU vendors for real-time game characters.

Modular vs. the competition

| Modular | Alternatives |
|---|---|
| **Burst Scaling for Spiky Traffic:** Compiler-aware auto-scaling spins up voice replicas in seconds. Scale to zero when quiet. No pre-provisioned idle GPUs burning cost between traffic spikes. | **Static Capacity:** Pay per character regardless of traffic volume, or reserve fixed capacity and pay for idle GPUs. No compiler-aware scaling. No scale-to-zero. |
| **Hardware Portability:** Run TTS on NVIDIA and AMD simultaneously. Shift voice workloads to whichever GPU offers better price-performance. Inworld AI proves this with the #1 ranked model on Artificial Analysis. | **Vendor Lock-In:** ElevenLabs, PlayHT, and Cartesia offer proprietary models on NVIDIA-only infrastructure. Zero GPU vendor choice. Higher costs. |
| **Compiler-Fused TTS Pipeline:** Attention, vocoder, and audio encoding are compiled and optimized as a single fused graph through MLIR. Every stage of the voice pipeline is optimized together, not stitched together. | **Runtime Wrappers:** PyTorch runtime with no cross-layer optimization. Attention, vocoder, and encoding run as separate, unoptimized stages. Higher latency, lower throughput. |
| **Custom Voice Model Support:** Bring proprietary TTS, voice cloning, or speech-to-speech models. Deploy with custom Mojo kernels for novel audio architectures. Your voice is your brand: own the stack. | **No Custom Model Path:** Use their models or nothing. No support for proprietary TTS architectures. No kernel-level programmability for novel audio pipelines. |
| **On-Device Voice:** Apple Silicon support for on-device voice synthesis. Zero network latency, zero data egress. Run TTS locally for privacy-sensitive and offline use cases. | **Cloud-Only Voice:** No on-device deployment path. Every synthesis request requires a network round-trip. No option for local, private, or offline voice. |

Get started with Modular

Schedule a demo of Modular and explore a custom end-to-end deployment built around your models, hardware, and performance goals.

Distributed, large-scale online inference endpoints

Highest performance to maximize ROI and minimize latency

Deploy in Modular Cloud or your own cloud

View all features with a custom demo

Book a demo

Talk with our sales lead Jay!

30min demo. Evaluate with your workloads. Ask us anything.

Book a demo for a personalized walkthrough of Modular in your environment. Learn how teams use it to simplify systems and tune performance at scale.

Custom 30 min walkthrough of our platform

Cover specific model or deployment needs

Flexible pricing to fit your specific needs

Run any open-source model in 5 minutes, then benchmark it. Scale it to millions yourself (for free!).

Install Mojo and get up and running in minutes. A simple install, familiar tooling, and clear docs make it easy to start writing code immediately.