Your model, your kernels, on any hardware.

Modular is the only inference platform where you can write custom model architectures and deploy them across NVIDIA, AMD, CPUs, and more - all from a single codebase. No vendor lock-in and no rewrites. Our forward deployed engineers are available to help, in our cloud or yours.

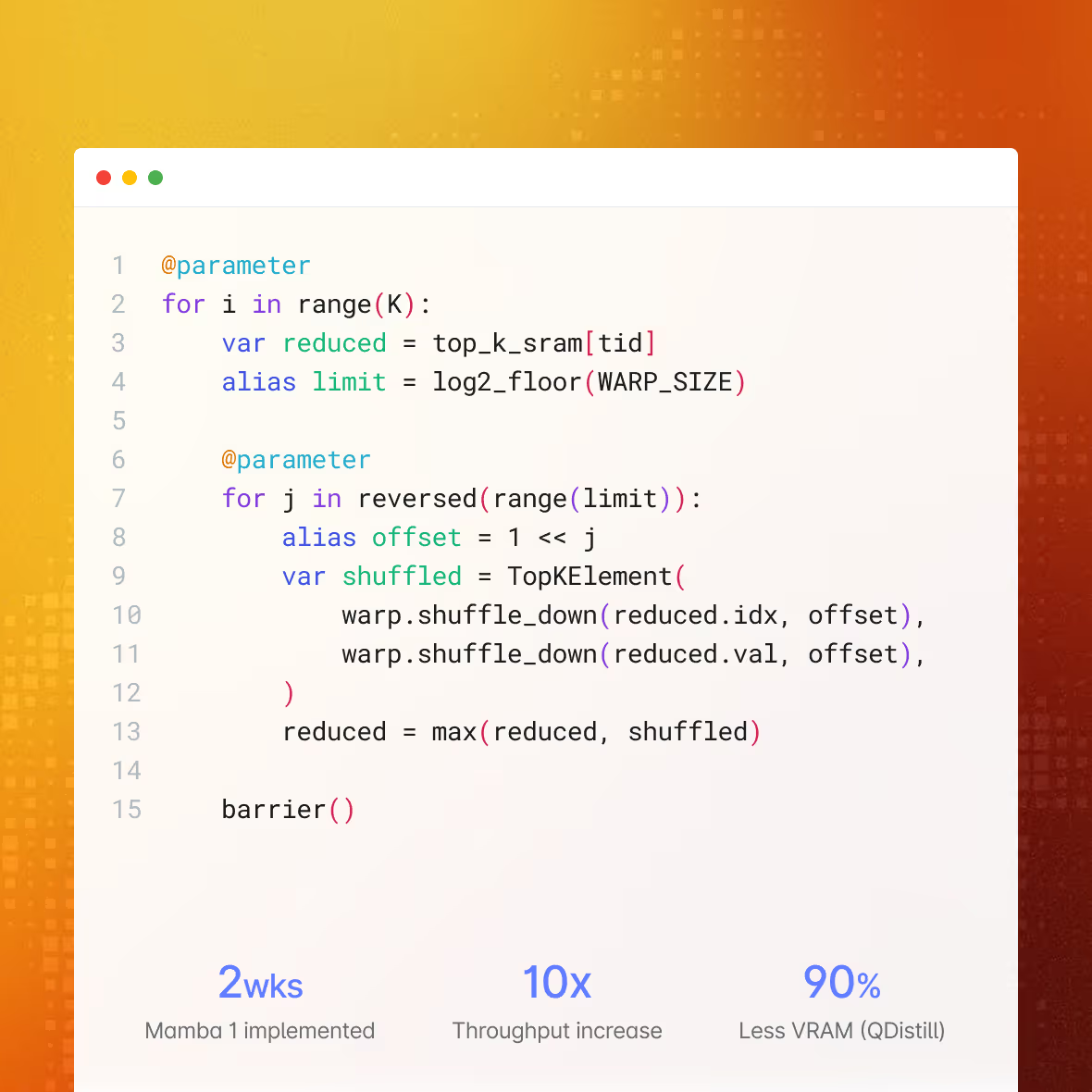

How Qwerky AI built a new model paradigm on MAX — in 2 weeks

“Traditional frameworks proved inefficient for our custom Mamba-based architectures. We could write CUDA kernels, but maintaining separate codebases for every vendor’s accelerator stack doesn’t make sense when you can write it once in Mojo.”

Why Modular for custom models?

Write once in MAX, compile to any target

Build your inference pipeline once using MAX's Python-based APIs. The MLIR compiler handles the rest - generating optimized code for NVIDIA, AMD, Apple Silicon, and ARM CPUs from a single source. No vendor-specific rewrites. No maintaining parallel codebases. When new hardware ships, recompile and deploy.

ONE CODEBASE. NVIDIA, AMD, APPLE SILICON, ARM.

Custom model architectures

Running a proprietary transformer variant, a state-space model, or something entirely novel? MAX's PyTorch-like model APIs let you define custom architectures and compile them for any supported hardware. Port existing models in minutes, not months - something no other inference platform can offer.

PORT CUSTOM MODELS IN MINUTES, NOT MONTHS

Custom cache architectures

Standard KV-cache doesn't fit every model. MAX gives you full control over cache design - implement sliding window, multi-query, grouped-query, or entirely custom caching strategies. Write them in Mojo for maximum performance, and they'll compile across hardware targets automatically.

FULL CACHE CONTROL FROM ATTENTION TO ACCELERATOR
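To make the sliding-window idea concrete, here is a minimal sketch in plain Python. It is not the MAX or Mojo cache API; the class name `SlidingWindowKVCache` and its methods are hypothetical, and real keys and values would be tensors rather than strings.

```python
from collections import deque


class SlidingWindowKVCache:
    """Hypothetical sketch: keep only the most recent `window` key/value pairs."""

    def __init__(self, window: int):
        if window <= 0:
            raise ValueError("window must be positive")
        self.window = window
        # deque(maxlen=...) silently evicts the oldest entry once full.
        self.keys = deque(maxlen=window)
        self.values = deque(maxlen=window)

    def append(self, key, value):
        """Store the key/value pair for the newest token."""
        self.keys.append(key)
        self.values.append(value)

    def snapshot(self):
        """Return the cached keys and values, oldest first."""
        return list(self.keys), list(self.values)


# Feed five tokens through a window of three; only the last three survive.
cache = SlidingWindowKVCache(window=3)
for t in range(5):
    cache.append(f"k{t}", f"v{t}")
keys, values = cache.snapshot()
```

The eviction policy is the only thing that changes between strategies like this one, so a custom cache mostly comes down to deciding what `append` keeps and what it drops.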

CPU+GPU kernels for cloud, edge and development

The same kernel code runs on cloud GPUs, edge devices, and your local machine. Develop and test on a MacBook with Apple Silicon, deploy to AMD MI355 in the data center, scale on NVIDIA B200s in the cloud. One set of kernels. Every environment.

ONE KERNEL CODEBASE, EVERY DEPLOYMENT TARGET

Validated against reference implementations

Every kernel and model optimization is tested against reference implementations for numerical accuracy. No silent quality degradation from aggressive quantization or untested fusion passes. You get the performance gains with confidence that outputs match expectations.

PERFORMANCE WITHOUT ACCURACY TRADEOFFS
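As a rough illustration of what checking against a reference can look like, the sketch below compares a stand-in "optimized" softmax with a numerically stable reference softmax. The function names and the tolerances are assumptions chosen for the example, not Modular's actual test harness or thresholds.

```python
import math


def softmax_reference(xs):
    """Numerically stable reference: subtract the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_candidate(xs):
    """Stand-in for an optimized kernel (here: the naive formulation)."""
    exps = [math.exp(x) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matches_reference(candidate, reference, rel_tol=1e-3, abs_tol=1e-5):
    """True when both outputs have equal length and agree element-wise."""
    if len(candidate) != len(reference):
        return False
    return all(
        math.isclose(c, r, rel_tol=rel_tol, abs_tol=abs_tol)
        for c, r in zip(candidate, reference)
    )


logits = [0.5, -1.0, 2.0]
ok = matches_reference(softmax_candidate(logits), softmax_reference(logits))
```

A check like this would typically run over many input shapes and value ranges, with tolerances chosen per data type.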

Iterate in hours, not weeks

Mojo's Python-compatible syntax means your ML team can write and modify GPU kernels without learning CUDA or HIP. Combined with MAX's fast compilation and a <700MB runtime, the loop from idea to deployed kernel shrinks from weeks of systems engineering to hours of focused work.

FROM IDEA TO DEPLOYED KERNEL IN HOURS

Production serving infrastructure

Continuous batching, KV-cache optimization, speculative decoding, auto-hardware detection, and OpenAI-compatible API endpoints - all compiled into a single container. No assembly required.

SINGLE MODEL. ONE CLICK. DEPLOY.
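Because the endpoints are OpenAI-compatible, a client can talk to them with the standard chat completions request shape. The sketch below only builds the request and does not send it. The base URL `http://localhost:8000/v1` and the model name `my-custom-model` are placeholders assumed for illustration; substitute whatever your deployment exposes.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt):
    """Build a POST request in the OpenAI chat completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder endpoint and model name (assumptions, not real defaults).
request = build_chat_request("http://localhost:8000/v1", "my-custom-model", "Hello")

# To send it against a running server:
#   with urllib.request.urlopen(request) as response:
#       print(json.load(response))
```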

Custom models on Modular today

Full SSM stack: selective scan, causal conv1d, recurrent state caching. Mamba 1 shipping. Mamba 2 and Gated DeltaNet in development. Purpose-built kernels validated against the original authors’ implementations.
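For readers new to SSM serving, the sketch below shows why causal conv1d and recurrent state caching go together: a streaming step that keeps the last few inputs reproduces the full-sequence result one token at a time. It is a plain-Python, single-channel illustration with the convention y[t] = sum over k of w[k] * x[t - k]. It is not the Modular kernel, and all names are hypothetical.

```python
def causal_conv1d(xs, weights):
    """Full-sequence causal convolution; inputs before t = 0 count as zero."""
    out = []
    for t in range(len(xs)):
        acc = 0.0
        for k, w in enumerate(weights):
            if t - k >= 0:
                acc += w * xs[t - k]
        out.append(acc)
    return out


class StreamingCausalConv1d:
    """Token-by-token version that caches the previous len(weights) - 1 inputs."""

    def __init__(self, weights):
        self.weights = list(weights)
        # Cached inputs, most recent first, zero-initialized.
        self.state = [0.0] * (len(self.weights) - 1)

    def step(self, x):
        window = [x] + self.state
        y = sum(w * v for w, v in zip(self.weights, window))
        # Drop the oldest input; the new one becomes the most recent.
        self.state = window[:-1]
        return y


xs = [1.0, 2.0, 3.0, 4.0]
weights = [0.5, 0.25, 0.125]
layer = StreamingCausalConv1d(weights)
streamed = [layer.step(x) for x in xs]
```

During decoding only `state` has to be kept per sequence, which is what makes the cache for this layer constant-size regardless of context length.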

Sliding window, sparse, linear, cross-attention with custom routing. Combine transformers + SSMs + custom layers in a single model graph. MAX compiles the full hybrid graph as one unit — fusing across architectural boundaries.

Custom quantization beyond standard int4/int8. Mojo gives bit-level control over weight representation and mixed-precision execution. Custom MoE gating networks and expert selection strategies compiled for any GPU.
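Bit-level control over weight representation is easiest to picture with the standard int4 baseline that custom schemes build on. The sketch below packs signed 4-bit values two per byte (low nibble first) and unpacks them again. The layout and the function names are assumptions for illustration, written in Python rather than Mojo, and do not describe Modular's storage format.

```python
def pack_int4(values):
    """Pack signed 4-bit integers (-8..7) two per byte, low nibble first."""
    if len(values) % 2 != 0:
        raise ValueError("need an even number of values")
    packed = bytearray()
    for lo, hi in zip(values[0::2], values[1::2]):
        for v in (lo, hi):
            if not -8 <= v <= 7:
                raise ValueError(f"{v} does not fit in a signed 4-bit integer")
        # Masking with 0xF keeps the two's-complement low 4 bits.
        packed.append((lo & 0xF) | ((hi & 0xF) << 4))
    return bytes(packed)


def unpack_int4(packed):
    """Inverse of pack_int4: recover the signed 4-bit integers."""
    values = []
    for byte in packed:
        for nibble in (byte & 0xF, byte >> 4):
            # Nibbles 8..15 represent the negative values -8..-1.
            values.append(nibble - 16 if nibble >= 8 else nibble)
    return values


weights = [-8, 7, 0, -1, 3, -4]
packed = pack_int4(weights)
restored = unpack_int4(packed)
```

A custom format would change the bit width, the grouping, or the scale handling, but the pack and unpack pair stays the core of the representation.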

Who builds custom models on Modular?

AI-native companies with proprietary architectures

If your moat is a custom model - a novel attention mechanism, a proprietary SSM, a hybrid architecture - you need an inference platform with both graph-level and kernel-level programmability. MAX provides the framework, and Mojo lets you write custom ops that compile for any hardware target. No other platform offers this.

EX: QWERKY AI - CUSTOM SSM ARCHITECTURES ON NVIDIA + AMD

Research labs going from paper to production

You published the paper. Now ship it. MAX's PyTorch-like APIs and Mojo's Python-compatible syntax mean your research team can go from prototype to production-grade serving without handing off to a separate systems engineering team. Same people, same code, real traffic.

EX: NOVEL ARCHITECTURES SERVING PRODUCTION TRAFFIC IN DAYS

Teams escaping hardware lock-in

Your CUDA kernels work - but only on NVIDIA. Every hardware generation means another rewrite. Mojo gives you the same low-level control with portability built in. Write it once, compile to NVIDIA, AMD, and Apple Silicon. Stop rewriting kernels every time you change GPUs.

EX: ONE SET OF KERNELS REPLACING SEPARATE CUDA AND HIP CODEBASES

Enterprise with compliance constraints

Custom models in regulated industries need inference that runs on your terms - air-gapped, on-prem, in your VPC. MAX ships as a single container under 700MB with zero external dependencies. Full data control, any hardware, no call-home.

EX: HIPAA, FEDRAMP, ITAR, AIR-GAPPED ENVIRONMENTS

Compare deployment options

| | Self-Hosted | Our Cloud | Your Cloud |
|---|---|---|---|
| Support | Active community and fast responses in Discord, Discourse, GitHub | Dedicated support, engineering team, standard and custom SLAs/SLOs | Dedicated support, engineering team, standard and custom SLAs/SLOs |
| Models | Hundreds of models in our model repo, view top performers | Top performers available for dedicated endpoint, custom model deployment | Top performers available for dedicated endpoint, custom model deployment |
| AI Skills | Use our open AI skills to easily write models, or optimize code | Our engineers can help train your team & migrate your workloads | Our engineers can help train your team & migrate your workloads |
| Platform access | Deploy MAX and Mojo yourself anywhere you want. Build with open source | Access Modular Platform with a console for deploying, scaling and managing your AI endpoints. | Access Modular Platform with a console for deploying, scaling and managing your AI endpoints. |
| Scalability | Scale on your own with the MAX container | Auto-scaling, scale to zero, burst capacity | Auto-scaling, proven at Fortune 500 scale. |
| Deployment location | Self-deployed, anywhere | Our cloud | Your cloud or hybrid |
| Compute hardware | NVIDIA, AMD, and Apple Silicon & more. Scaling restrictions apply. | NVIDIA & AMD GPUs in our cloud. More hardware coming soon. | NVIDIA & AMD GPUs, Intel, AMD & ARM CPUs - deployed in your cloud. |
| Custom kernels | Your engineers write custom kernels for your workloads. | Modular engineers tune kernels for your workloads | Modular engineers write custom kernels for your workloads |
| Forward Deployed Engineers | Available with support plan | Included | Included; working in your environment |
| Security & Compliance | SOC 2 Type 2 certified | SOC 2 Type 2 certified | SOC 2 Type 2 certified |
| Billing & Pricing | Free | Per token (shared) Per minute (dedicated) | Per minute deployed. Use your AWS/GCP/Azure credits and commits |
| Enterprise Contract | | | |

Get started with Modular

Schedule a demo of Modular and explore a custom end-to-end deployment built around your models, hardware, and performance goals.

Distributed, large-scale online inference endpoints

Highest performance to maximize ROI and minimize latency

Deploy in Modular cloud or your cloud

View all features with a custom demo

Book a demo

Talk with our sales lead Jay!

30min demo. Evaluate with your workloads. Ask us anything.

Book a demo for a personalized walkthrough of Modular in your environment. Learn how teams use it to simplify systems and tune performance at scale.

Custom 30 min walkthrough of our platform

Cover specific model or deployment needs

Flexible pricing to fit your specific needs


Run any open source model in 5 minutes, then benchmark it. Scale it to millions yourself (for free!).

Install Mojo and get up and running in minutes. A simple install, familiar tooling, and clear docs make it easy to start writing code immediately.