In Part 1, we introduced the structured kernel architecture and made the case for why it exists. Conventional GPU kernels (where pipeline coordination, data movement, and compute interleave across thousands of lines) are difficult to reason about, maintain, and extend. Adding block-scaled quantization to a conventional kernel means writing a new kernel. Adding support for a new hardware feature means touching code that has nothing to do with that feature. Structured Mojo Kernels address this by separating concerns into three components with defined interfaces, and we showed the result: 48% less code, identical performance, and a conv2d kernel that reused the entire matmul infrastructure with only ~130 lines of conv-specific code.

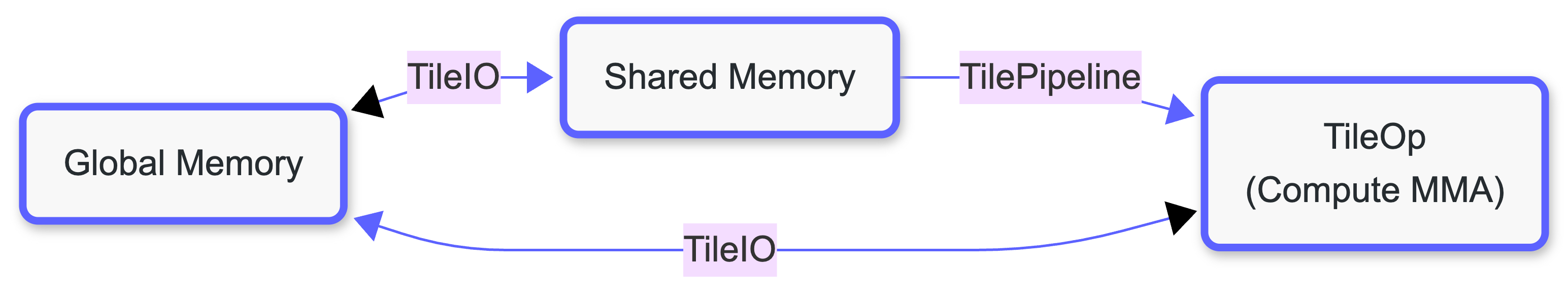

This post explains the components of Structured Mojo Kernels: TileIO, TilePipeline, and TileOp. Each component forms a node in a kernel execution pipeline, and the links between them create a logical separation of concerns that makes kernels easier to extend and update. That organization matters because GPU kernels don't stay static. By abstracting hardware optimized implementations into patterns, the same kernel structure can adapt across NVIDIA and AMD hardware generations with minimal rewrite.

The Data Flow Model

A structured matmul kernel moves data through three distinct memory levels: global memory (DRAM), shared memory (SMEM/LDS), and registers or tensor memory. The three components form nodes in a data flow and are responsible for the different stages of execution. TileIO moves tiles from DRAM to shared memory. TilePipeline signals when tiles are ready to consume or free to refill. TileOp reads from shared memory, runs MMA instructions, and writes accumulators. This separation of concerns between the different components allows one to reason about each component in isolation.

TileIO: Data Movement Abstraction

TileIO handles all data movement between memory hierarchies. Its responsibility is to hide the complexity of platform-specific DMA operations behind a clean interface.

What TileIO Encapsulates

| Concern | NVIDIA | AMD | Encapsulated By |

|---|---|---|---|

| DMA operation | TMA async_copy | load_to_lds | load() |

| Address calculation | TMA descriptors | Buffer resources | Constructor |

| Layout transformation | TMA swizzle modes | Manual swizzle | Configuration |

| Masking/bounds | TMA handles | Manual checks | load() |

NVIDIA: TileLoaderTMA

On NVIDIA Blackwell, TileIO wraps TMA (Tensor Memory Accelerator, explained in a previous blog post) operations. The loader stores a pointer to the TMA descriptor and a multicast mask for cluster distribution:

The loader is deliberately minimal; it wraps one TMA descriptor and one multicast mask to expose a data movement primitive. The kernel manages all orchestration (k-group iteration, barrier management, expected byte counts), and interacts with the loader through the stage readiness/consumption protocol exposed by TilePipeline, which we'll discuss in "TilePipeline: The Pipeline Coordinator".

AMD: TileLoaderLDS

On AMD, TileIO uses buffer resources for cooperative global-to-LDS loading. The architecture requires a fundamentally different loading strategy:

Despite the completely different mechanisms, the abstraction allows users of the API to avoid the hardware details, and the call site for both looks the same.

TilePipeline: The Pipeline Coordinator

TilePipeline manages the producer-consumer pipeline that overlaps loading, computing, and storing. It does not move data and does not run MMA instructions, instead it just signals when tiles are full or empty.

NVIDIA: InputTilePipeline

On NVIDIA Blackwell, TilePipeline uses hardware mbarrier primitives. mbarrier provides hardware-accelerated arrive/wait, automatic byte counting that integrates with TMA, and phase tracking across pipeline stages. Parameterizing the pipeline by payload type means the same synchronization logic works for both standard and block-scaled tile configurations; only the payload differs:

The InputProducer and InputConsumer role handles provide acquire() methods that return context managers. The with producer.acquire() as tiles: pattern automatically handles barrier waits on entry and stage advancement on exit.

AMD: Atomic Counter Synchronization

AMD lacks the mbarrier operation. As a consequence, kernels on AMD cannot offload barrier byte-counting to hardware, instead all synchronization is explicit. The design uses a SyncStrategy trait to make the ring buffer logic independent of the synchronization mechanism:

Two concrete implementations trade off simplicity for reduced contention:

The underlying synchronization primitives are worth examining directly:

Two non-obvious decisions here. First, only the first thread in each warp increments the counter. All threads in a warp execute in lockstep, so having all threads in a warp (64 on AMD) issue fetch_add would produce 64 increments where one is correct. Second, s_sleep 0 yields the warp scheduler rather than burning compute cycles in a tight spin. On AMD, issuing a zero-cycle sleep hint lets other wavefronts make progress while this one waits.

Despite the completely different primitives, both NVIDIA and AMD TilePipeline expose the same acquire/release interface. The ring buffer logic above it is platform-agnostic.

TileOp: The Compute Driver

TileOp encapsulates all matrix computation: loading operands from shared memory to registers (or TMEM), executing MMA/mfma instructions, and managing accumulator storage.

NVIDIA Blackwell: TMEM-Based Accumulator

Blackwell introduces Tensor Memory (TMEM, explained in a previous blog post), a 256KB per-SM scratchpad dedicated to accumulator storage. This matters because moving accumulators out of the register file frees it for other uses and enables larger tile sizes. TileOp manages the full TMEM lifecycle through a context manager:

The context manager matters beyond tidiness. TMEM must be deallocated only after the epilogue warp has finished reading accumulators. If the MMA warp deallocates early, the epilogue reads garbage. If it never deallocates, the next kernel invocation fails to allocate.

As part of the pipeline, the MMA operation itself receives tiles from the pipeline and executes tcgen05.mma instructions, writing results to TMEM accumulators.

AMD MI300X: Register-Based Accumulator

On AMD, there is no dedicated tensor memory. Accumulators live in VGPRs alongside operands, and the compiler manages their allocation. As a result, we define the TileOperator as:

The Key Difference

| Aspect | NVIDIA Blackwell | AMD MI300X |

|---|---|---|

| Accumulator storage | Tensor Memory (256KB) | Registers (VGPRs) |

| Instruction | tcgen05.mma | mfma |

| Operand source | Direct from SMEM | Must load to registers first |

| Allocation | Explicit allocate/deallocate | Compiler-managed |

Both TileOp implementations present the same conceptual interface (load fragments, compute MMA, store results) but the resource lifecycle is structurally different. NVIDIA's context manager requirement is a direct consequence of explicit TMEM allocation. AMD needs no such wrapper because the compiler handles register lifetimes automatically.

TileTensor: The Tensor Datastructure

All three components share a common foundation: TileTensor. This abstraction carries compile-time layout information (shape, stride, swizzle patterns), memory address space placement (global memory, shared memory, registers), and type-safe access patterns. TileTensor is what allows TileIO, TilePipeline, and TileOp to hand tiles to each other without any component knowing the other's internal representation.

Example: Thread-to-Element Mapping

Putting Everything Together: A Complete Pipeline

Here's how the three components interact in the actual Blackwell structured matmul kernel. Each warp role has one responsibility, and the pipeline structure is explicit in the code.

Setup: shared memory and pipeline initialization

TMA Load Warp: data movement only

The load warp acquires producer stages, issues TMA loads, and signals completion. It does not touch accumulators or barriers beyond what TilePipeline exposes.

MMA Warp: compute only

The MMA warp waits on the input pipeline, computes, and signals the output pipeline. mma_ctx owns the TMEM allocation for the lifetime of this block: entering the context signals the epilogue that accumulators are allocated, and exiting the context deallocates only after the epilogue signals it has finished reading.

Epilogue Warp: output only

The epilogue warp reads from TMEM through the output pipeline and writes to global memory. It signals the MMA warp when it is done, triggering TMEM deallocation.

What’s Next

We've now seen each pillar from the inside: TileIO moves data, TilePipeline coordinates timing, TileOp does the compute. In Part 3, we will explore the practical benefit of this modular design. We take two real kernel families, conv2d and block-scaled matmul, and trace exactly how they are built around the matmul foundation.

TL;DR

- TileIO owns data movement: hides TMA vs. buffer load differences behind a single

load()call - TilePipeline owns coordination:

mbarrieron NVIDIA, atomic counters on AMD, same acquire/release interface - TileOp owns computation: TMEM on NVIDIA (with explicit RAII lifecycle), registers on AMD (compiler-managed)

TileTensoris the shared data abstraction: one type across all memory levels and address spaces- Component boundaries are enforced by types, not convention: the load warp cannot issue MMA instructions because TilePipeline does not expose that interface

.png)