Blog

Democratizing AI Compute Series

Go behind the scenes of the AI industry with Chris Lattner

Develop locally, deploy globally

The recent surge in AI application development can be attributed to several factors: (1) advancements in machine learning algorithms that unlock previously intractable use cases, (2) the exponential growth in computational power enabling the training of ever more complex models, and (3) the ubiquitous availability of the vast datasets required to fuel these algorithms. However, as AI projects become increasingly pervasive, effective development paradigms, like those commonly found in traditional software development, remain elusive.

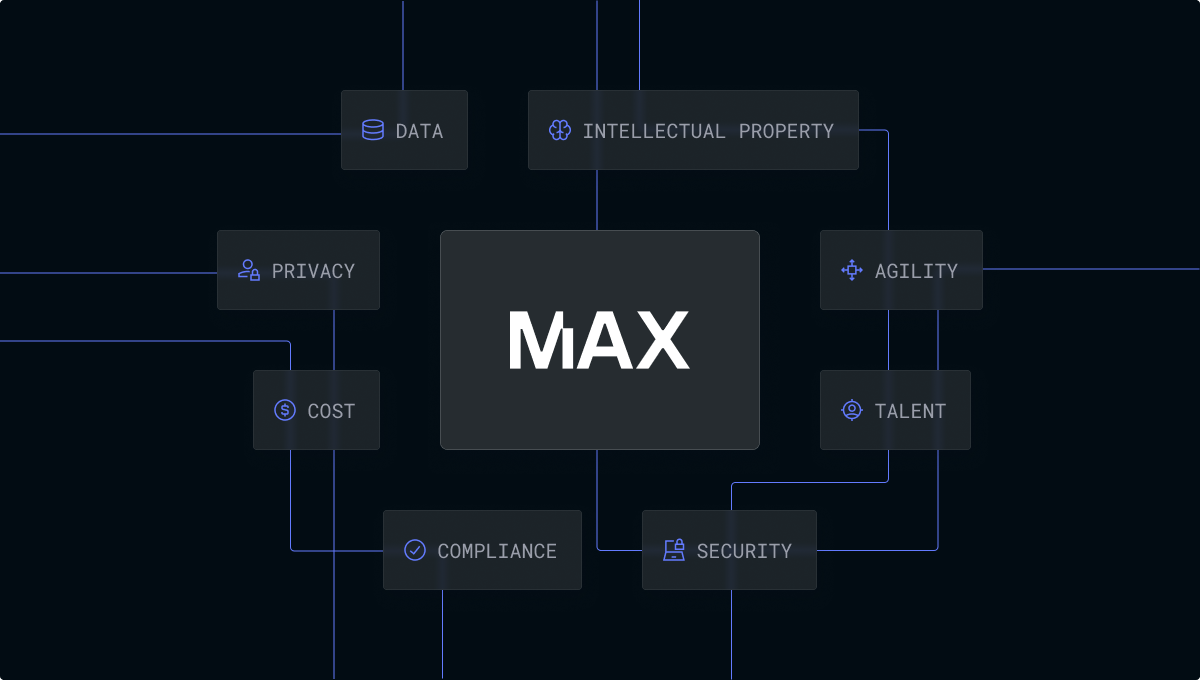

Take control of your AI

In today’s rapidly evolving technology landscape, adopting and rolling out AI across your enterprise is critical to improving your organization’s productivity and to delivering a world-class product and service experience to your customers. AI is, without question, the single most important technological revolution of our time: a new technology super-cycle that your enterprise cannot afford to miss.

Bring your own PyTorch model

The adoption of AI by enterprises has surged over the last couple of years, particularly with the advent of Generative AI (GenAI) and Large Language Models (LLMs). Most enterprises start by prototyping and building proof-of-concept products (POCs) using all-in-one API endpoints provided by big tech companies like OpenAI and Google, among others. However, as these enterprises transition to full-scale production, many are looking for ways to control their own AI infrastructure. This requires the ability to effectively manage and deploy PyTorch.

MAX 24.4 - Introducing quantization APIs and MAX on macOS

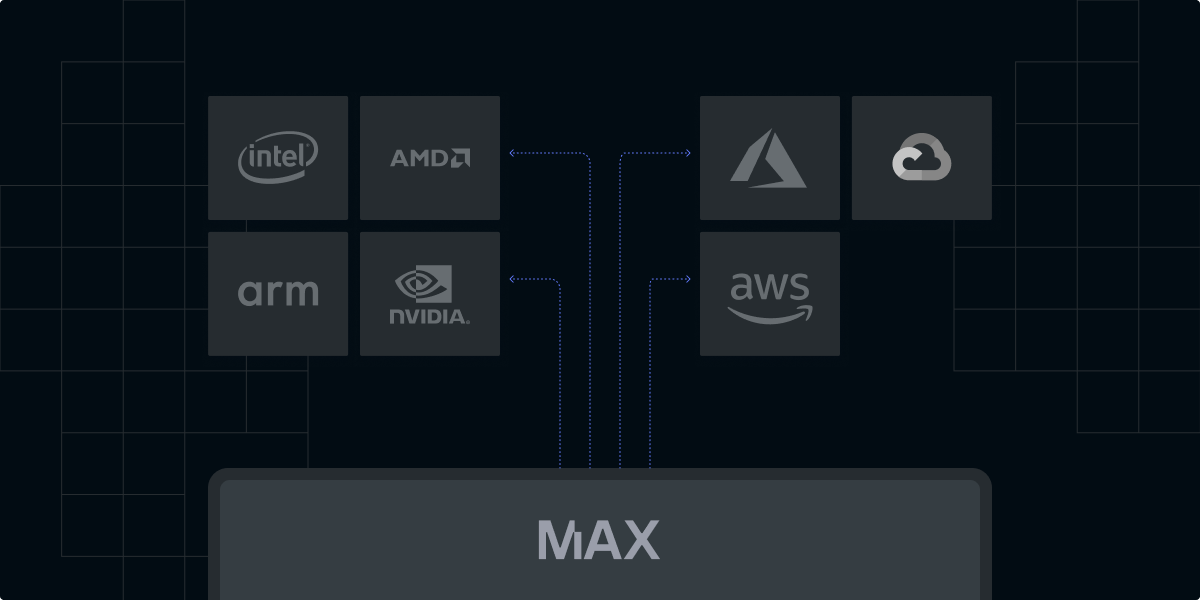

Today, we're thrilled to announce the release of MAX 24.4, which introduces a powerful new quantization API for MAX Graphs and extends MAX’s reach to macOS. Together, these unlock a new industry-standard paradigm in which developers can use a single toolchain to build Generative AI pipelines locally and seamlessly deploy them to the cloud, all with industry-leading performance. Using the Quantization API reduces the latency and memory cost of Generative AI pipelines by up to 8x on desktop architectures like macOS, and up to 7x on cloud CPU architectures like Intel and Graviton, without requiring developers to rewrite models or update any application code.

Announcing MAX Developer Edition Preview

Modular was founded on the vision of enabling AI to be used by anyone, anywhere. We have always believed that to achieve this vision, we must first fix the fragmented and disjointed infrastructure upon which AI is built today. As we said 2 years ago, we imagine a different future for AI software, one that rings truer now than ever before.

Start building with Modular

Quick start resources

Get started guide

With just a few commands, you can install MAX as a conda package and deploy a GenAI model on a local endpoint.

Browse open source models

500+ supported models, most of which have been optimized for lightning-fast speed on the Modular platform.

Find examples

Follow step-by-step recipes to build agents, chatbots, and more with MAX.