This edition captures everything happening across the Modular ecosystem, from developers building with MAX and Mojo🔥 to the broader impact Modular is having across AI infrastructure. Here's a look at what's been happening lately.

Community Innovations

The Modular community continues to impress. From practical tooling to GPU experiments in unexpected domains, here's what developers have been creating with MAX and Mojo:

- ArgMojo: Yuhao Zhu built a full-featured CLI argument parser for Mojo, inspired by Python's argparse, Rust's clap, and Go's cobra. It supports long/short options, flag merging, negatable flags, mutually exclusive groups, and auto-generated `--help`/`--version`. Install via `pixi add argmojo`.

- EmberJSON: Lazy Parsing for Arbitrary Structs: Brian Grenier took EmberJSON further, using Mojo's new type reflection utilities to add structured de/serialization and lazy parsing. Users can now defer parsing individual fields (or an entire struct) until the value is actually needed.

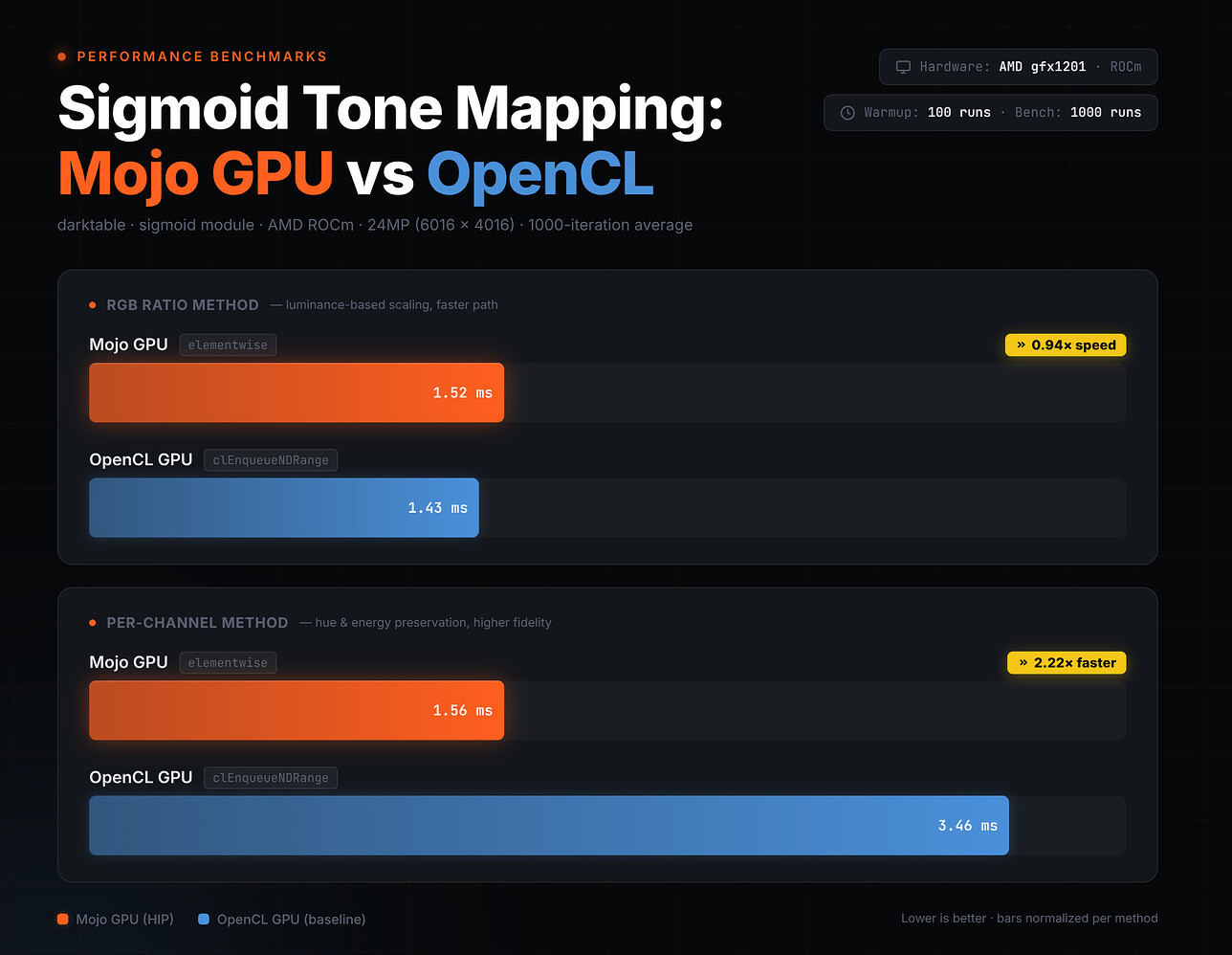
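ArgMojo's feature list borrows concepts from Python's argparse. For reference only, here is how those same features (long/short options, merged flags, negatable flags, mutually exclusive groups, auto-generated help/version) look in Python's standard `argparse` module. This is not ArgMojo's API, and the program name and options (`demo`, `--output`, `--json`/`--yaml`, etc.) are hypothetical.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    # -h/--help is generated automatically by argparse.
    parser = argparse.ArgumentParser(prog="demo")
    # --version prints the version string and exits.
    parser.add_argument("--version", action="version", version="%(prog)s 0.1")
    # One option reachable by a short (-o) and a long (--output) name.
    parser.add_argument("-o", "--output", default="out.txt")
    # Boolean short flags; argparse lets them be merged, as in -vf.
    parser.add_argument("-v", "--verbose", action="store_true")
    parser.add_argument("-f", "--force", action="store_true")
    # Negatable flag: accepts both --color and --no-color (Python 3.9+).
    parser.add_argument("--color", action=argparse.BooleanOptionalAction, default=True)
    # At most one of --json / --yaml may be given.
    fmt = parser.add_mutually_exclusive_group()
    fmt.add_argument("--json", action="store_true")
    fmt.add_argument("--yaml", action="store_true")
    return parser


if __name__ == "__main__":
    args = build_parser().parse_args(["-vf", "--no-color", "--json", "-o", "report.txt"])
    print(args.verbose, args.force, args.color, args.json, args.output)
```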

- Mojo GPU Kernels in Digital Photo Editing: Max Chistokletov explored replacing Darktable's OpenCL image processing kernels with Mojo GPU kernels. For the more compute-intensive per-channel sigmoid tonemapping operation, his Mojo implementation ran 2.2x faster than OpenCL on a Radeon RX 9070 (RDNA4), a compelling look at where Mojo can shine outside of AI inference.

- MojoR: A "Numba" for R: Statistics PhD Seyoon Ko is building a JIT compiler that transpiles standard R code into Mojo kernels for CPU/GPU execution, without leaving the R session. Early benchmarks on a bivariate Gibbs Sampler show ~117x speedup over GNU R (0.16s vs. 19.28s).

- Mist: ANSI-Friendly Terminal Toolkit: Mikhail Tavarez refactored `mist` into a comprehensive terminal manipulation library for Mojo, covering text styling, ANSI-aware transformations, terminal control (alternate screen buffer, mouse capture, cursor manipulation), and event parsing. New TUI examples, including a snake game, are available in the companion `banjo` repo.

- Floki: Requests-like HTTP Client: Also from Mikhail Tavarez, Floki is an HTTP client for Mojo with an API modeled after Python's `requests` package, powered by libcurl.

- Decimo v0.8.0 (formerly DeciMojo): Yuhao Zhu shipped a major milestone release focused on performance. Highlights include a brand-new base-2^32 `BigInt` type with Karatsuba multiplication and Burnikel-Ziegler division, Toom-Cook 3-way multiplication for `BigUInt`, and significantly improved `sqrt`, `ln`, and `exp` implementations for `BigDecimal`. Install via `pixi add decimo`.

- NuMojo v0.8.0: Photon's NumPy-like library for Mojo got a big update: Python-style complex number literals (`1j`), initial matrix views, explicit copy semantics aligned with Mojo's ownership model, improved NumPy-compatible slicing with full negative indexing, and a Pixi-based build backend. A major step toward v1.0.

- Mojo-GTK: Autogenerated GTK Bindings: Hammad Ali wrote Python scripts that autogenerate GTK bindings for Mojo, covering most of GTK's widgets and functionality. Tested on macOS and Ubuntu, with an OpenGL demo included.

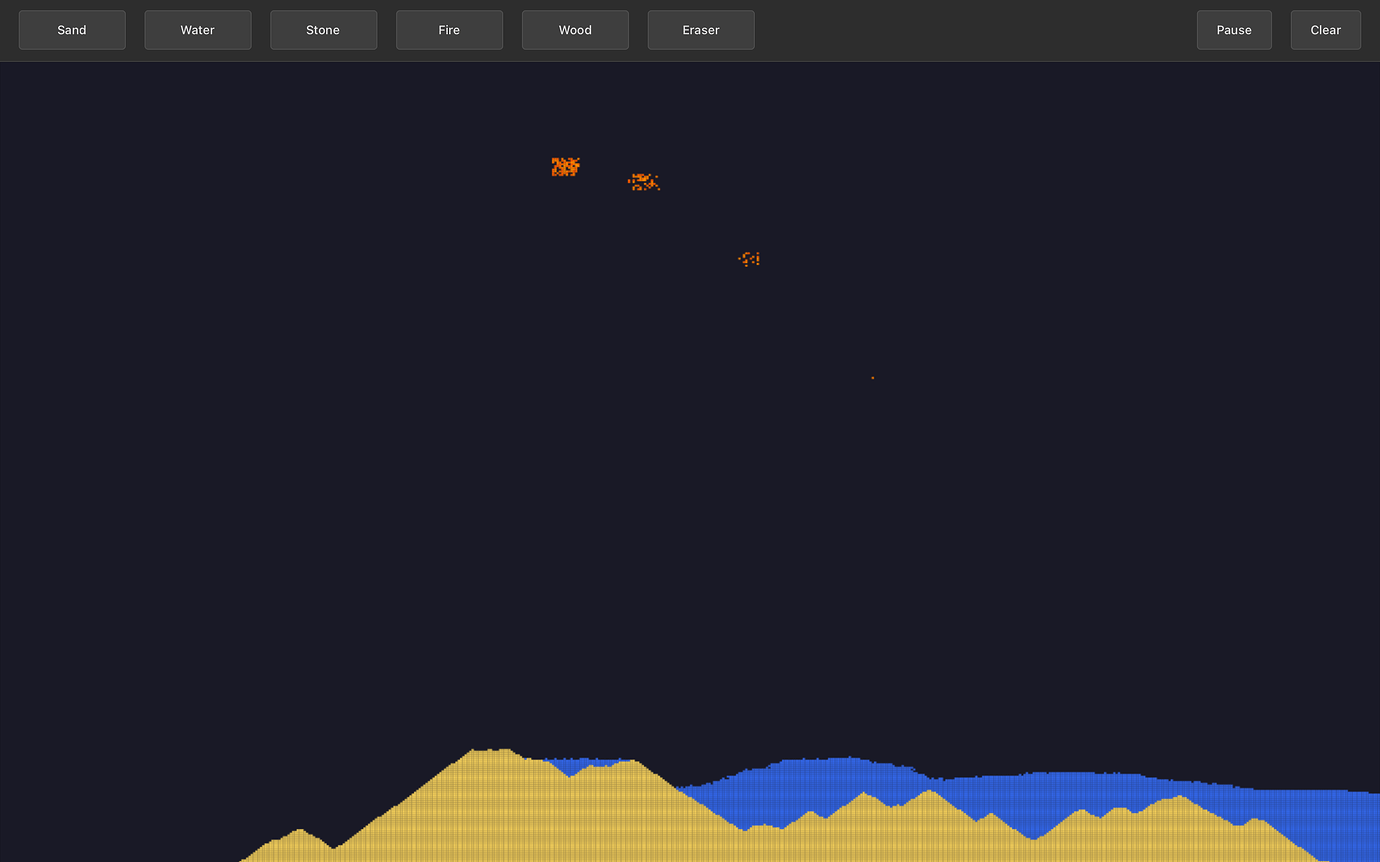
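For readers curious about the Karatsuba multiplication in the Decimo release, here is a minimal sketch of the idea in Python: split each operand in half and compute three recursive products instead of four. This is not Decimo's implementation (which works on base-2^32 limbs); it operates on Python's built-in integers, and the 2^32 cutoff for falling back to ordinary multiplication is an arbitrary choice.

```python
def karatsuba(x: int, y: int) -> int:
    """Multiply two non-negative integers with Karatsuba's algorithm."""
    # Small operands: fall back to the built-in multiply.
    if x < 2**32 or y < 2**32:
        return x * y
    # Split both numbers at m bits: x = x1*2^m + x0, y = y1*2^m + y0.
    m = max(x.bit_length(), y.bit_length()) // 2
    mask = (1 << m) - 1
    x1, x0 = x >> m, x & mask
    y1, y0 = y >> m, y & mask
    z2 = karatsuba(x1, y1)
    z0 = karatsuba(x0, y0)
    # One extra product replaces the two cross terms x1*y0 + x0*y1.
    z1 = karatsuba(x1 + x0, y1 + y0) - z2 - z0
    return (z2 << (2 * m)) + (z1 << m) + z0
```

Trading one multiplication for a few additions lowers the cost from O(n^2) to roughly O(n^1.585), which is why big-integer libraries switch to it once operands grow large.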

- Powder Simulation: To put those GTK bindings through their paces, Hammad also built a falling-sand-style powder simulation in Mojo using cairo and GTK, featuring sand, water, fire, stone, and wood. A fun demo of what's possible with Mojo GUI development.

- mojo-marimo: Run Mojo in Marimo Notebooks: Michael Booth released a library offering three patterns for running Mojo inside interactive Python notebooks via marimo: a decorator-based API, a dynamic string executor, and compiled `.so` extension modules for near-zero call overhead. Now available on PyPI as `py-run-mojo`.
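The MojoR benchmark above uses a bivariate Gibbs sampler. The exact target distribution in that benchmark isn't stated here, so as an assumption the sketch below uses the textbook case: a standard bivariate normal with correlation `rho`, where each full conditional is itself normal, X | Y=y ~ N(rho·y, 1 − rho²). It shows the kind of tight, sequential scalar loop that interpreted R handles poorly; the function name is hypothetical.

```python
import math
import random


def gibbs_bivariate_normal(n: int, rho: float, seed: int = 0) -> list[tuple[float, float]]:
    """Draw n samples from a standard bivariate normal with correlation rho."""
    rng = random.Random(seed)
    sd = math.sqrt(1.0 - rho * rho)  # standard deviation of each conditional
    x = y = 0.0
    samples = []
    for _ in range(n):
        # Alternate draws from each full conditional distribution.
        x = rng.gauss(rho * y, sd)
        y = rng.gauss(rho * x, sd)
        samples.append((x, y))
    return samples
```

In practice you would discard an initial burn-in segment of the chain before using the samples.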

💡 We love seeing what you create! Share your MAX or Mojo projects in the forum under the Community Showcase category so we can highlight them in the next edition.

Modular Making Waves

Modular continues to make an impact across the AI and developer space. Here's a roundup of what's been happening:

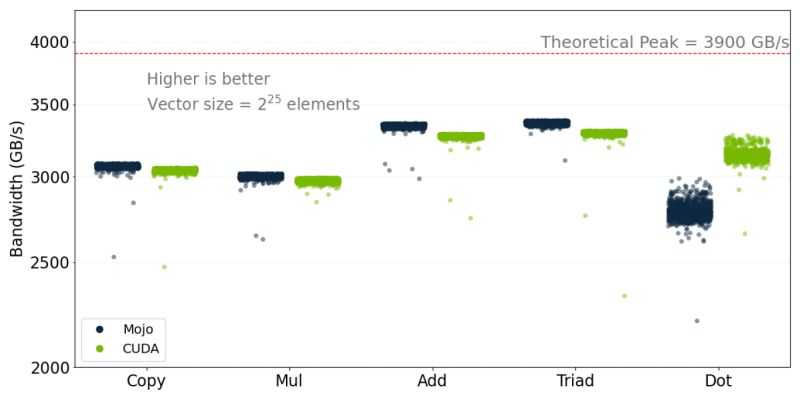

- Researchers from Oak Ridge National Laboratory (ORNL) and the University of Tennessee, Knoxville published a peer-reviewed paper at SC '25 evaluating Mojo for performance-portable scientific computing on GPUs. Their results show Mojo is competitive with CUDA and HIP for memory-bound science kernels across NVIDIA H100 and AMD MI300A GPUs, and the paper took Best Paper at the WACCPD 2025 workshop. Read the paper. Tatiana Melnichenko from ORNL also presented this work at a recent community meeting and broke down what the results mean for Mojo. Watch the recording.

- Community contributor Maxim Zaks wrote a great post, "When 'Magic' Becomes Explicit," exploring how Mojo's compile-time reflection and default trait method implementations let any library author build the kind of automatic conformance synthesis that Swift keeps locked behind compiler magic. He also presented at CODAI 2026, proposing Mojo as the answer to the multi-platform AI compute problem, walking through compile-time generics, cross-GPU dispatch, and where MAX fits in. Watch the talk.

- QWERKY AI published a detailed technical deep-dive on how their team built first-class state space model (SSM/Mamba) support into the MAX framework in two weeks, including what may be the first-ever CPU-only Selective Scan and causal conv1d kernels and a new SSM cache layer. A great read on what it's like extending MAX to support a fundamentally different model architecture.

- Mojo in Jupyter is now a thing. fast.ai co-founder Jeremy Howard released mojokernel, a Jupyter kernel that lets you run Mojo directly in notebooks with full variable persistence across cells. Works on macOS, supports recent Linux versions, and installs via pip or uv. Give it a try!

- TensorWave published a blog post on running real AI workloads (inference, training, and scaling) on AMD GPUs, featuring the Modular platform as part of their stack.

- Business Insider covered how Modular is making it possible to run AI across any chip without rewriting code, and what that means for the future of AI infrastructure. Check it out here.

- Chris Lattner joined Scott Hanselman on the Hanselminutes podcast to talk about Mojo and why today's AI infrastructure demands new abstractions. They cover the full arc from LLVM and Swift to Mojo, digging into heterogeneous compute, memory ownership, and what it means to give developers precise control over how AI workloads hit silicon.
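QWERKY's post describes CPU Selective Scan kernels for Mamba-style SSMs. As a rough illustration of what such a kernel computes, here is a naive single-channel scan in Python. It follows the simplified recurrence commonly used to describe Mamba, h_t = exp(Δ_t·A) ⊙ h_{t−1} + Δ_t·B_t·x_t and y_t = C_t · h_t with a diagonal A, and omits Mamba's D·x_t skip term for brevity. The function name and argument layout are hypothetical, and this is not QWERKY's kernel; a production kernel would typically parallelize across channels rather than loop in pure Python.

```python
import math
from typing import Sequence


def selective_scan(
    x: Sequence[float],            # input sequence for one channel, length L
    delta: Sequence[float],        # input-dependent step sizes, length L
    A: Sequence[float],            # diagonal of the state matrix, length N
    B: Sequence[Sequence[float]],  # per-step input projections, L x N
    C: Sequence[Sequence[float]],  # per-step output projections, L x N
) -> list[float]:
    n = len(A)
    h = [0.0] * n  # hidden state starts at zero
    y = []
    for t in range(len(x)):
        for i in range(n):
            # Discretize: A_bar = exp(delta*A), B_bar approximated as delta*B.
            h[i] = math.exp(delta[t] * A[i]) * h[i] + delta[t] * B[t][i] * x[t]
        # Readout: y_t = C_t . h_t
        y.append(sum(C[t][i] * h[i] for i in range(n)))
    return y
```

With `A = [0.0]` and unit `delta`, `B`, and `C`, the state never decays, so the output is simply a running sum of the input.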

Open Source Contributions

If you've recently had your first PR merged, message Inaara Walji in the forum to claim your Modular swag! Check out the recently merged contributions from our amazing community members:

- aalexmmaldonado [1] [2]

- bgreni [1] [2]

- BlackWingedKing [1]

- bmerkle [1]

- christoph-schlumpf [1]

- izo0x90 [1]

- jaidmin [1]

- jmikaelr [1]

- josiahls [1]

- jrinder42 [1]

- KrxGu [1] [2] [3] [4] [5]

- magi8101 [1] [2]

- martinvuyk [1] [2] [3] [4] [5]

- msaelices [1] [2] [3] [4] [5]

- RossCampbellDev [1]

- RWayne93 [1] [2]

- saviorand [1]

- sbrunk [1]

- sdgunaa [1]

- SirishaDuba [1]

- soraros [1]

- turakz [1] [2] [3] [4]

- YeonguChoe [1]

- YichengDWu [1] [2] [3]

Modular News & Events: Stay Connected

- Modular is heading to NVIDIA GTC 2026! Find us at Booth #3004, March 16-19 in San Jose. Get a first look at Modular Cloud, now in early access, with DeepSeek V3.1 serving live. Plus live Mojo 🔥 GPU programming on NVIDIA Blackwell, the latest AI models in MAX, and AI-assisted kernel development. All powered by Mojo 🔥 and MAX, a simpler way to hit SOTA performance across heterogeneous hardware. Come see the demos, meet the team, and grab some swag 🙌 Follow along for updates: https://luma.com/gtc-modular

- Join us for our next community meeting on March 23rd at 10am PT via Zoom, featuring exciting community projects, a deep dive into Variadic Metaprogramming in Mojo, and an overview of our latest release 👀

- Missed our February community meeting? Hammad Ali showed off his auto-generated GTK bindings for Mojo, Tatiana Melnichenko from Oak Ridge National Laboratory shared her award-winning GPU performance research, and Brad Larson walked through the 26.1 release highlights including new Mojo language features and Apple Silicon GPU support. Catch the recap here.