
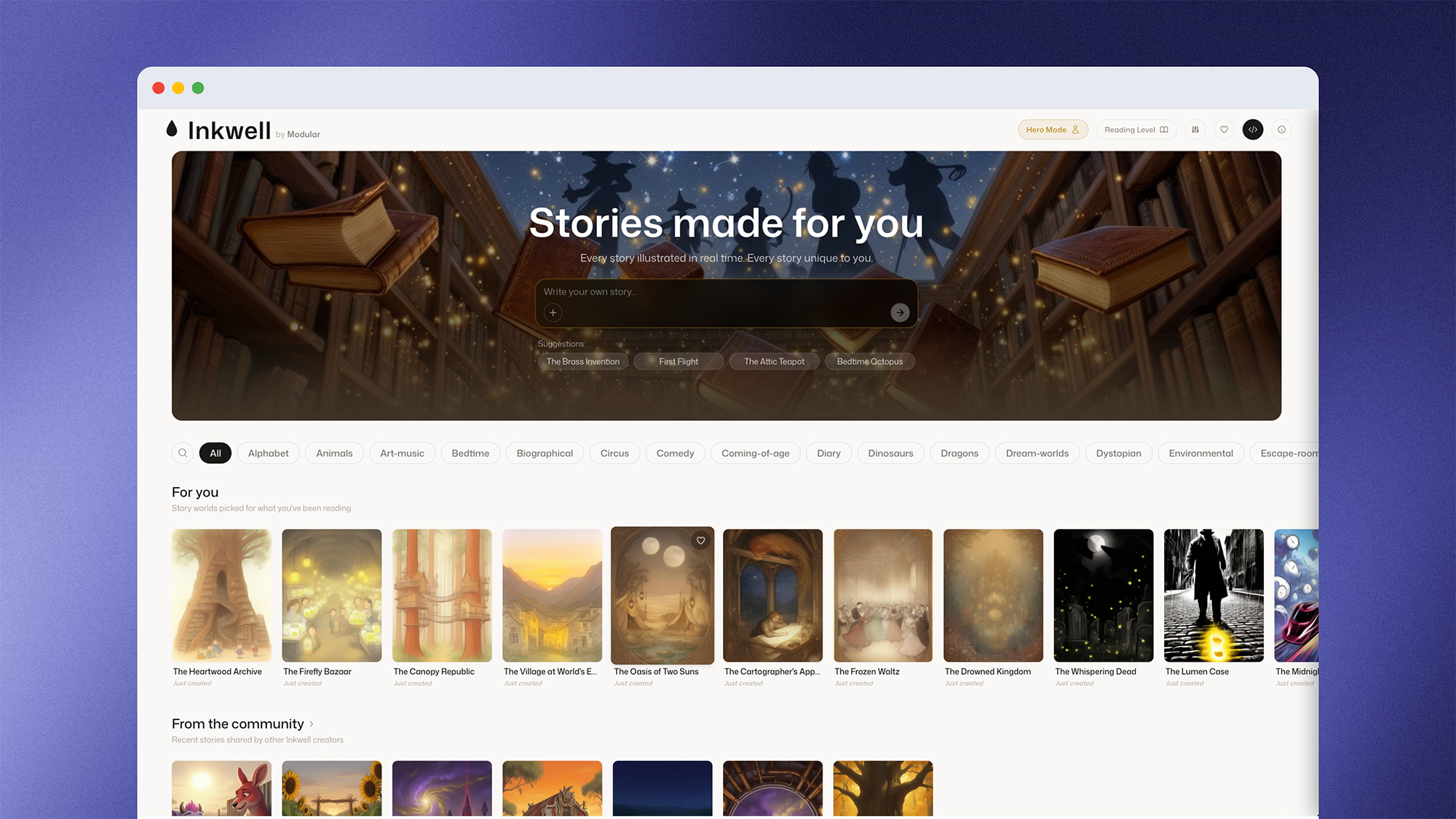

I have spent most of my career obsessing over latency in AI systems - first at Google, working on critical AI infrastructure, and now at Modular, building the inference platform that delivers incredible performance across NVIDIA and AMD hardware. We engineered Modular Cloud for the most latency-sensitive inference workloads: applications where text generation and image diffusion have to feel immediate, not eventual. I created Inkwell, a real-time illustrated storybook, as a way to share story time with my kids - and as a concrete test of what Modular Cloud makes possible.

Building an application around real-time image generation requires that the entire user experience feels immediately responsive. Content cannot be pulled from a stock library or pre-cached, but it also cannot be locked behind long wait times. Building on Modular Cloud’s Gemma 4 31B and Flux 2-dev 32B endpoints, Inkwell returns the first prose of a new page in 420 ms and a fully painted illustration in under 6 seconds. That performance is essential to Inkwell’s user experience.

Inkwell: an app designed around high-speed diffusion

Inkwell is a web app that lets users create interactive storybooks with a custom character along infinite branching paths. When the user opens a story, the first page of text and art streams in - text appears character by character over a WebSocket within the first second, the illustration paints in as the user reads, and by the time they tap a choice, the next page is already written and illustrated. Creating a user experience around the seamless generation of new content requires an inference layer that can perform at scale.

The performance numbers

Measured over 24 hours of production traffic on inkwell.modular.com in May 2026

| Component | Provider | Measured P50 |
|---|---|---|
| Story text (~305 tokens) | Gemma 4 31B on Modular Cloud | TTFT ~420 ms; 78 tok/s; full page ~4.7 s |
| Illustration (1024 px, 25 steps) | Flux 2-dev on Modular Cloud | ~3.3 s |
| Choices (3 verb-led options) | Embedded in same Gemma call | Free (overlapped) |
| Cover thumbnail | R2-cached, regenerated on miss | |

That 420 ms TTFT (time to first token) is what makes the rest of the design possible. The first content to hit the page needs to feel instantaneous; the art and the subsequent pages can stream in while the user reads.

Why TTFT determines your architecture

The central optimization in Inkwell is starting image diffusion before the text generation completes. Gemma's structured output for each page is a single JSON object with the sequential fields title → story → image_prompt → character_anchor → story_plan → choices. A streaming extractor watches the partial JSON and calls the Flux endpoint the moment image_prompt closes, around token 200 of 305. The LLM will continue streaming text for roughly 1.3 seconds. Flux's 3.3 seconds of work runs against that tail instead of after it.

This architecture only works if the inference provider has fast TTFT and sufficient steady-state throughput on long structured prompts. A delayed image_prompt response creates a bottleneck for both streaming text and image generation.
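The streaming extractor described above can be sketched in a few lines. This is a minimal illustration, not Inkwell's actual implementation: the `FieldExtractor` class and its `feed` method are hypothetical names, and the regex approach assumes `image_prompt` is a plain JSON string field. It accumulates streamed chunks and reports the field the first time its closing quote arrives, which is the moment the Flux call could be launched.

```python
import json
import re
from typing import Optional

# Matches a complete JSON string value for a key, honoring backslash escapes.
_FIELD_RE = r'"{key}"\s*:\s*("(?:[^"\\]|\\.)*")'


class FieldExtractor:
    """Hypothetical helper: watches partial JSON for one string field."""

    def __init__(self, key: str) -> None:
        self._pattern = re.compile(_FIELD_RE.format(key=re.escape(key)))
        self._buffer = ""
        self._done = False

    def feed(self, chunk: str) -> Optional[str]:
        """Add a streamed chunk; return the value once, when the field closes."""
        if self._done:
            return None
        self._buffer += chunk
        match = self._pattern.search(self._buffer)
        if match is None:
            return None  # field not started, or closing quote not seen yet
        self._done = True
        return json.loads(match.group(1))  # decode escapes such as \"


# Simulated token stream; the field is split across chunk boundaries.
chunks = [
    '{"title": "The Fox", "story": "Once upon',
    ' a time", "image_pro',
    'mpt": "a red fox in \\"sn',
    'ow\\"", "character_',
    'anchor": "fox"}',
]
extractor = FieldExtractor("image_prompt")
fired = [extractor.feed(chunk) for chunk in chunks]
print(fired)
```

In this sketch the value is returned on the fourth chunk, before the rest of the object has arrived; a real client would start the image request at that point and keep streaming the remaining fields. Re-scanning the whole buffer on every chunk is acceptable for a ~305-token page but would need an incremental parser for much longer outputs.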

Prefetch and the 85% cache hit rate

Streaming overlap pulls first paint to 420 ms, but the full page still takes ~5.9 seconds on a cold generation. A product defined by its responsiveness needs to drive this average down wherever possible.

In a linear story, this is addressed by preloading and caching the next page while the user reads the current page. When the user selects a “choose your own adventure” story, they are provided with three branching options, so the next page isn’t known. Instead of waiting for a user selection and then generating the next page while the user waits, Inkwell prefetches the three possible pages in parallel. Each prefetched generation carries the parent scene's image_seed, character_anchor, art_style, and story_plan, ensuring visual and narrative continuity.
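A parallel prefetch of this kind might look like the following sketch. The four context fields come from the description above; everything else (`SceneContext`, `generate_page`, `prefetch_branches`, and the sample values) is a hypothetical stand-in, and `generate_page` only simulates work with a short sleep rather than calling any real endpoint.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneContext:
    """Continuity fields carried from the parent scene into each branch."""
    image_seed: int
    character_anchor: str
    art_style: str
    story_plan: str


async def generate_page(choice: str, ctx: SceneContext) -> dict:
    """Hypothetical stand-in for one text + image generation."""
    await asyncio.sleep(0.01)  # simulated inference latency
    return {"choice": choice, "image_seed": ctx.image_seed, "art_style": ctx.art_style}


async def prefetch_branches(choices: list[str], ctx: SceneContext) -> dict[str, dict]:
    """Generate every branch concurrently and cache the ones that succeed."""
    results = await asyncio.gather(
        *(generate_page(choice, ctx) for choice in choices),
        return_exceptions=True,  # one failed branch should not sink the others
    )
    return {
        choice: page
        for choice, page in zip(choices, results)
        if not isinstance(page, BaseException)
    }


ctx = SceneContext(
    image_seed=42,
    character_anchor="a small red fox",
    art_style="watercolor",
    story_plan="the fox looks for a new home",
)
options = ["Climb the hill", "Follow the river", "Knock on the door"]
cache = asyncio.run(prefetch_branches(options, ctx))
print(sorted(cache))
```

Because the three generations run concurrently, the wall-clock cost of prefetching is roughly that of one page rather than three, at the price of generating two pages the user will never see.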

Based on traffic data, users read each page for around 7 seconds, which sits comfortably above the 5.9 seconds it takes those next pages to generate. When the user selects one of the three options provided (which happens in production around 85% of the time), the page turn takes 48 ms. The user only waits longer than that on the very first page of a new story, or when they write in a fully custom path that no prefetch could have anticipated. The typical page turn the user experiences is a cache hit, not a cold generation.
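To put those figures side by side, here is a back-of-the-envelope calculation. It assumes, as a simplification not stated in the measurements, that every miss costs a full 5.9-second cold generation and every hit costs exactly 48 ms.

```python
HIT_RATE = 0.85   # share of page turns served from the prefetch cache
HIT_MS = 48       # page-turn latency on a cache hit
COLD_MS = 5900    # assumed cost of a miss: one full cold generation

# Expected page-turn latency with prefetching.
mean_ms = HIT_RATE * HIT_MS + (1 - HIT_RATE) * COLD_MS

# Without prefetching, every page turn would pay the cold cost.
speedup = COLD_MS / mean_ms

print(f"mean page turn: {mean_ms:.0f} ms")
print(f"improvement over no prefetch: {speedup:.1f}x")
```

Under these assumptions the mean page turn comes to about 926 ms, versus 5.9 seconds with no prefetch, while 85% of individual turns complete in 48 ms. The remaining mean is almost entirely the cost of the misses.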

The importance of the inference platform

Three platform properties of Modular Cloud made Inkwell’s design possible, and they are the kind of properties that you only really notice when you have built against an inference platform that does not have them.

One API surface for both modalities

Both Gemma 4 31B and Flux 2-dev are exposed on Modular Cloud with an endpoint that is compatible with the OpenResponses API.

The provider abstraction is a single constructor call:

```python
self.client = AsyncOpenAI(
    base_url=settings.flux_base_url,  # or llm_base_url
    api_key=settings.modular_api_key,
)
```

Swapping providers is a single environment variable change routed through a FallbackProvider wrapper:

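```python
import logging

logger = logging.getLogger(__name__)


class FallbackProvider:
    # Constructor shown for completeness; its exact shape is an assumption.
    def __init__(self, primary, secondary):
        self.primary = primary
        self.secondary = secondary

    async def generate_opening_page(self, prompt, **kw):
        try:
            return await self.primary.generate_opening_page(prompt, **kw)
        except Exception as exc:
            logger.warning("llm_primary_failed err=%s — falling back", exc)
            return await self.secondary.generate_opening_page(prompt, **kw)
```

The parser on the prompt-expand side doesn't know which provider answered. This eliminates the need for hundreds of lines of provider-specific glue.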

Latency that makes the overlap design viable

On Modular Cloud, Inkwell sees a 420 ms time to first token and 78 tok/s of steady-state throughput on long structured prompts. This enables the overlapped generation of text and images outlined above. Because the LLM response includes the image_prompt for the diffusion model, slower providers caused cascading wait times for image generation.

Runtime footprint

Modular’s runtime is under 700 MB and the Inkwell backend image is around 220 MB total. On Cloudflare Containers (Firecracker VMs), smaller images mean faster cold starts. A 5-minute cron warmup ping keeps the stateless pool container from going cold, which at standard-1 idle pricing runs about $4 per week per environment. The smaller Modular container footprint meant cold starts were both faster and less frequent. In practice: cold starts that would have caused a visible delay on the first page turn instead resolve before the WebSocket handshake completes.

What comes next: streaming denoising

The largest remaining latency in Inkwell is the Flux call. Even with the LLM overlap, the user waits around 3 seconds for the image to appear. The Flux API today only returns the final denoising step (step 25 of 25), even though steps 5, 10, and 17 already contain most of the perceptual signal: recognizable composition, locked color and lighting, emerging texture. Steps 22 to 25 refine details that are largely imperceptible at 1024px, but the user waits for all of it before seeing anything.

This is where MAX’s architecture matters directly. Rather than running each diffusion stage through PyTorch separately with memory round-trips between them, MAX compiles the full pipeline (DiT, VAE, text encoder, scheduler) into a single fused execution graph through MLIR. Every denoising step is optimized together, and that compiled pipeline is what makes it possible for Modular to implement server-side intermediate-emit: the steps are already executing as a coherent graph, not as a sequence of independent calls that would have to be re-stitched at the API boundary.

Modular is implementing that server-side emit now. The API will stream denoising steps as Server-Sent Events: {step: N, total: 25, image_base64: "..."}. The client crossfades frames as they arrive.

t = 0.4 s → step 5/25 (rough composition, subject recognizable)

t = 0.8 s → step 10/25 (color and lighting settled)

t = 1.5 s → step 17/25 (textures in)

t = 3.0 s → step 25/25 (final)
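On the client side, consuming such a stream could look roughly like the sketch below. The event payload shape is the one quoted above; since the streaming API is still being implemented, the wire details are assumptions, and `DenoiseFrame`, `iter_frames`, and `make_event` are hypothetical names. The parser works on any iterable of text lines, so it is exercised here with simulated events instead of a live connection.

```python
import base64
import json
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class DenoiseFrame:
    step: int
    total: int
    image: bytes  # decoded image bytes for this intermediate step


def iter_frames(lines: Iterable[str]) -> Iterator[DenoiseFrame]:
    """Yield one frame per Server-Sent Event; a blank line ends an event."""
    data_parts: list[str] = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line.startswith("data:"):
            value = line[5:]
            # SSE allows a single optional space after the colon.
            data_parts.append(value[1:] if value.startswith(" ") else value)
        elif line == "" and data_parts:
            payload = json.loads("\n".join(data_parts))
            data_parts = []
            yield DenoiseFrame(
                step=payload["step"],
                total=payload["total"],
                image=base64.b64decode(payload["image_base64"]),
            )
        # Comment lines and other SSE fields (event:, id:) are ignored here.


def make_event(step: int) -> list[str]:
    """Build a simulated event with placeholder bytes instead of a real image."""
    payload = {
        "step": step,
        "total": 25,
        "image_base64": base64.b64encode(b"frame-%d" % step).decode(),
    }
    return ["data: " + json.dumps(payload) + "\n", "\n"]


sample_stream = [line for step in (5, 10, 17, 25) for line in make_event(step)]
frames = list(iter_frames(sample_stream))
for frame in frames:
    print(f"step {frame.step}/{frame.total}: {len(frame.image)} bytes")
```

Each yielded frame is where a UI would crossfade to the newer image; the final frame is the one with `step == total`.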

Combining streaming denoising with the prose typewriter means that every page becomes a multi-modal stream from the first second; text fills in across the page while the image blooms. It’s the same 3.3 seconds of compute, but the user is engaged from 0.4 seconds instead of waiting for the final reveal.

The pattern for low-latency user experiences

There is a consistent pattern across every optimization in Inkwell: don't wait for the complete response - stream the intermediate states as they come in and optimize time to first paint.

That includes streaming story characters to the typewriter as they arrive, extracting image_prompt from the partial JSON the moment it closes, prefetching all branching paths before the user selects one, and soon streaming the intermediate denoising steps from Flux while the user reads. Diffusion models have always emitted intermediate states internally; Modular is implementing the server-side emit now.

The inference platform's job is to make each of those patterns possible rather than require the application to work around them. The single biggest leverage point in a real-time generative consumer app is no longer the model; it is a platform that can deliver faster responses and expose intermediate states. That is what Modular Cloud is built to provide, and those two properties are what make every optimization in this post possible.

Try Inkwell Yourself: inkwell.modular.com

API access for Gemma 4 and Flux 2: console.modular.com

Modular’s AI Model Library: modular.com/models

Request a demo: modular.com/request-demo
